Adding Models

This section explains the steps to add OpenAI models and configure the required access controls.

Navigate to OpenAI Models in AI Gateway

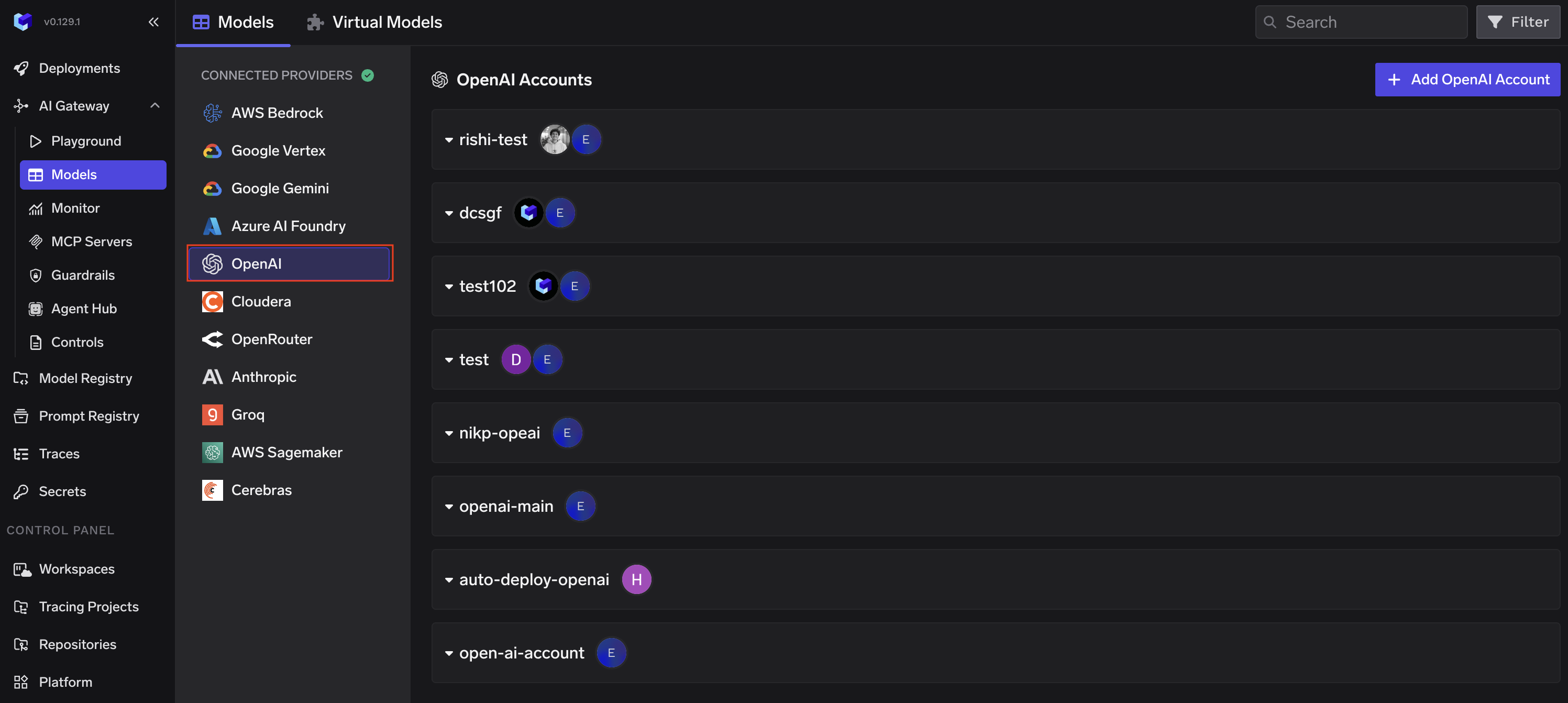

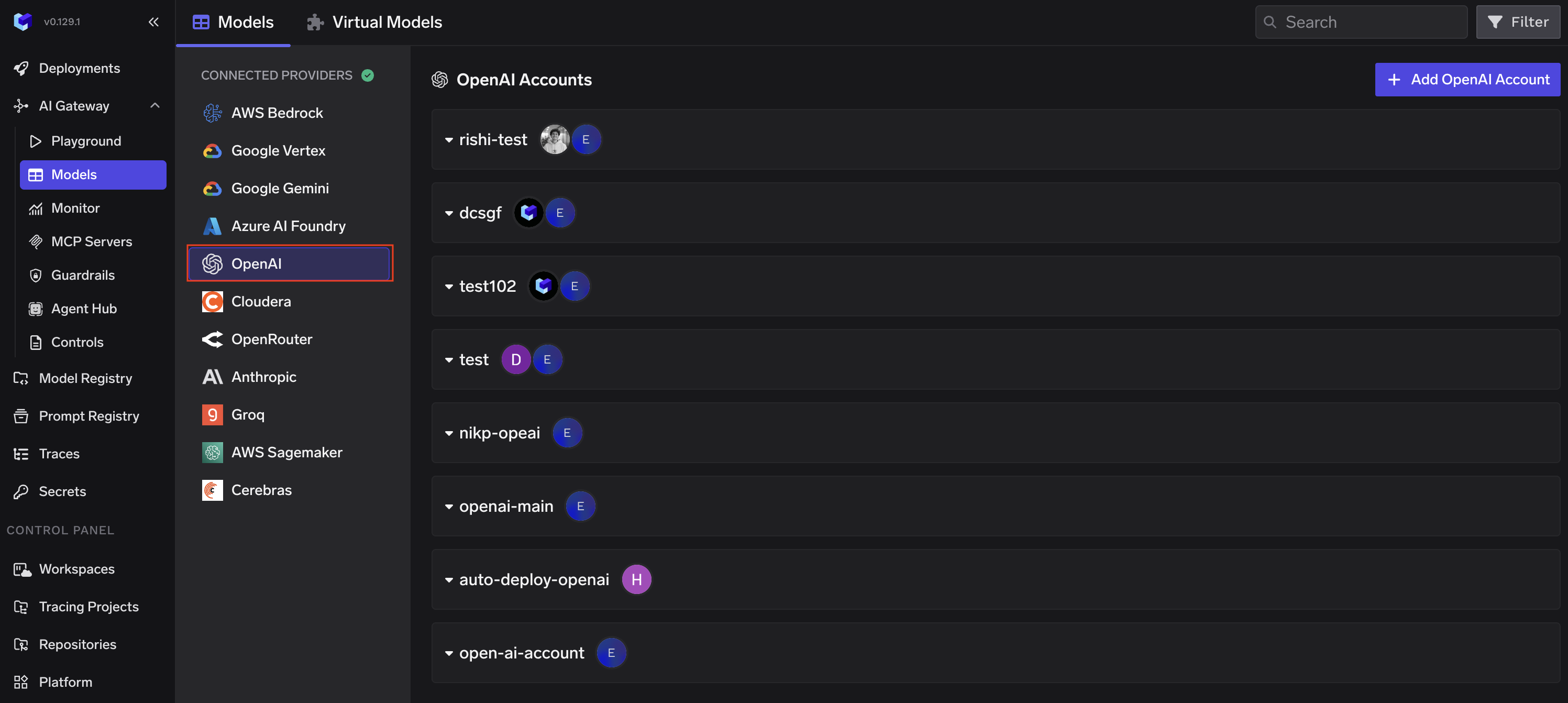

From the TrueFoundry dashboard, navigate to AI Gateway > Models and select OpenAI.

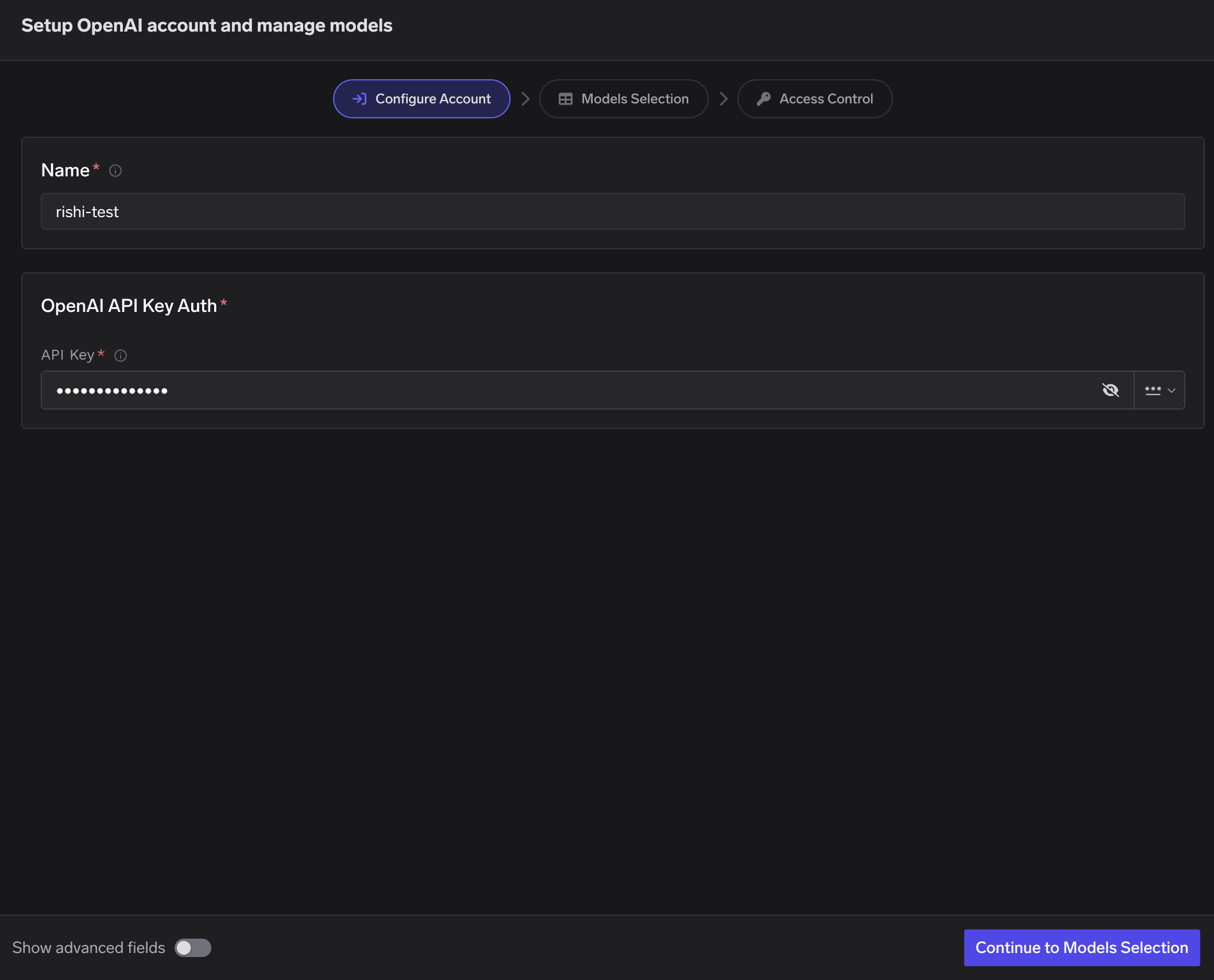

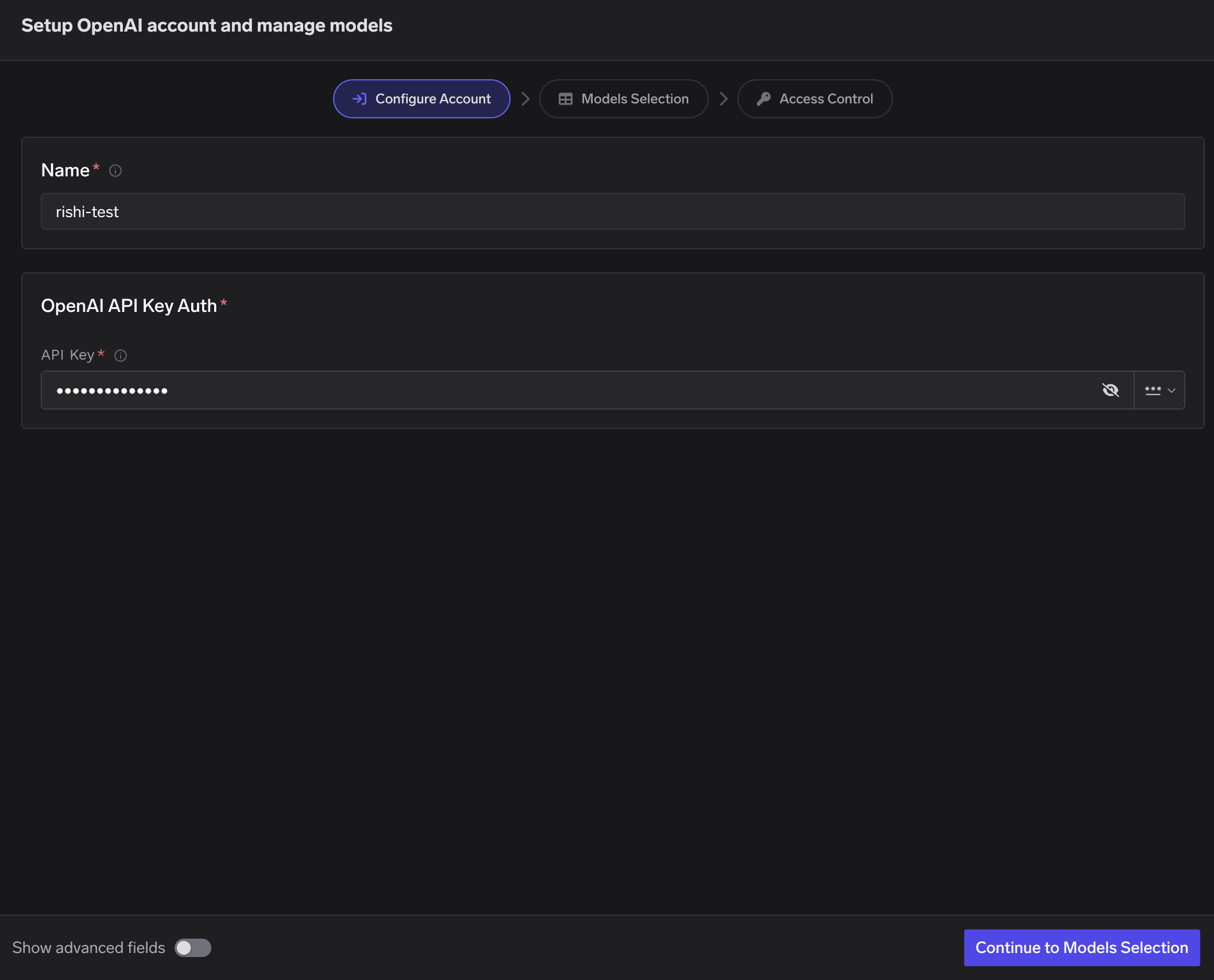

Configure Account

Click Add OpenAI Account. Give a unique name to your OpenAI account and provide your API Key.

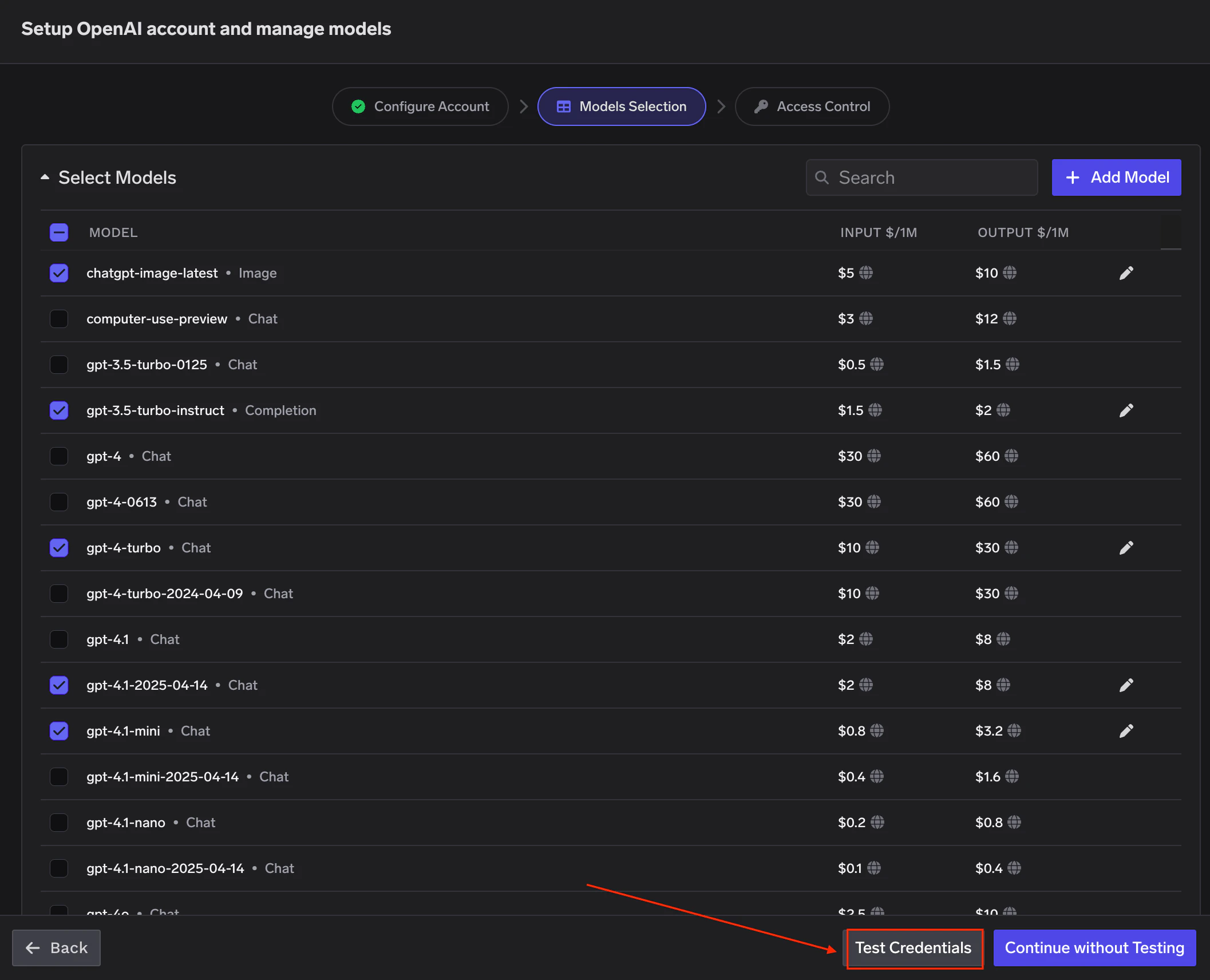

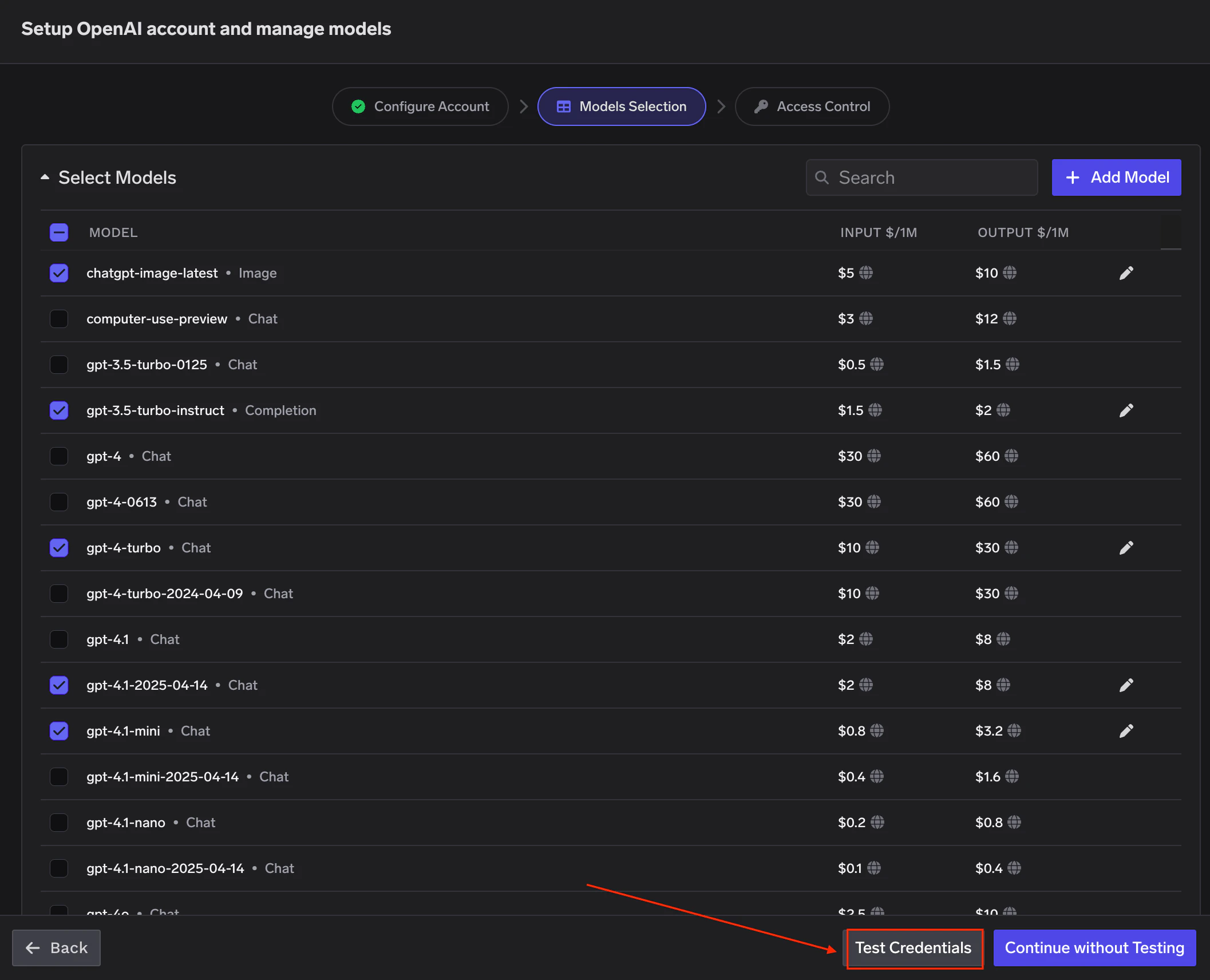

Select Models

Select the models you want to enable from the list. Pricing per million tokens is shown for each model.

If the model you are looking for is not listed, click + Add Model at the bottom to add it manually.

TrueFoundry AI Gateway supports all text and image models in OpenAI. The complete list of models supported by OpenAI is available in OpenAI's models documentation.

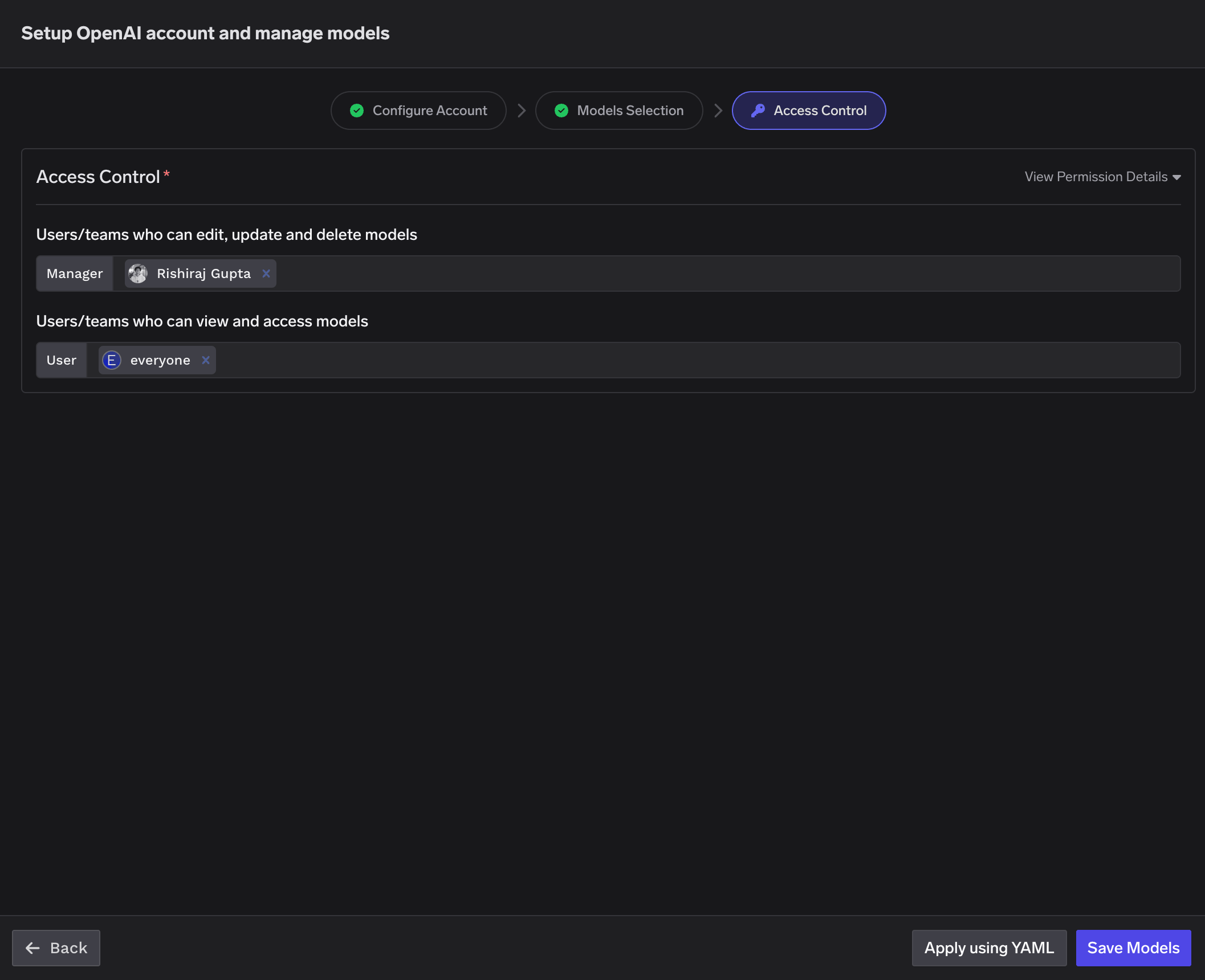

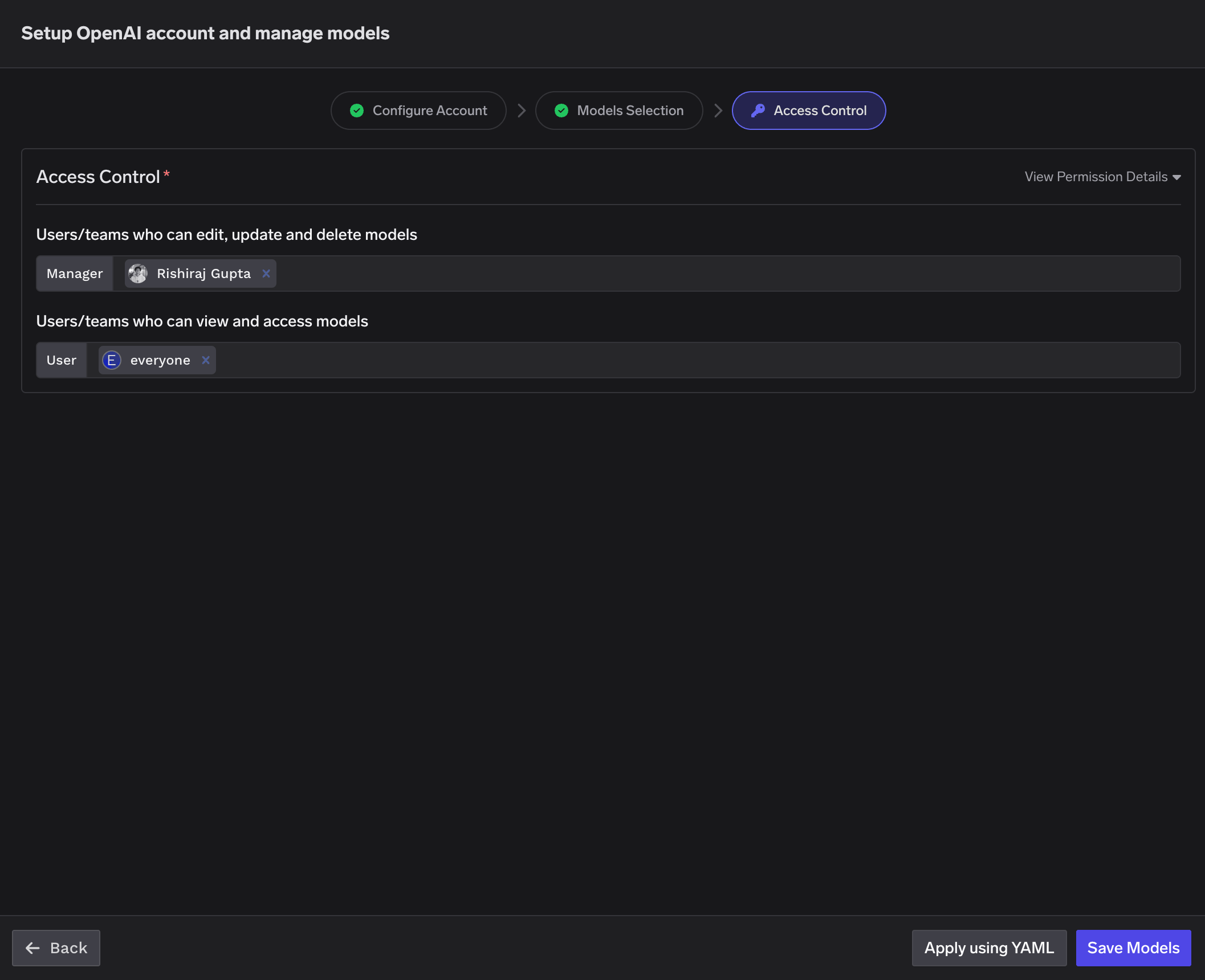

Set Access Control

Configure who can manage and access the models in this provider account. See the TrueFoundry access control documentation for details.

Inference

After adding the models, you can perform inference using an OpenAI-compatible API via the Playground or by integrating with your own application.

Supported APIs

Once your OpenAI provider account is configured, the following API surfaces are available through the gateway. The table below lists each endpoint along with its platform feature support (tracing and cost tracking).

Legend:

- ✅ Supported by the provider and TrueFoundry
- Supported by the provider, but not by TrueFoundry
- Provider does not support this feature

| API | Endpoint | Tracing | Cost Tracking |
|---|---|---|---|
| Chat Completions | /chat/completions | ✅ | ✅ |
| Embeddings | /embeddings | ✅ | ✅ |
| Responses API | /responses | ✅ | ✅ |
| Image Generation | /images/generations | ✅ | |
| Image Edit | /images/edits | ✅ | |
| Image Variation | /images/variations | ✅ | |
| Text-to-Speech | /audio/speech | ✅ | |
| Speech-to-Text | /audio/transcriptions | ✅ | |
| Audio Translation | /audio/translations | ✅ | |
| Batch API | /batches | ✅ | |
| Files API | /files | ✅ | |
| Moderation | /moderations | ✅ | Free API |
| Fine-tuning | /fine_tuning/jobs | ✅ | |
| Realtime API | /live/{provider-account} | ✅ | ✅ |

Chat Completions

The chat completions endpoint is the most widely used. It supports streaming, function calling, multimodal input (images, audio, PDF), structured JSON outputs, reasoning models, and prompt caching. Full provider capability matrix: Chat Completions API.

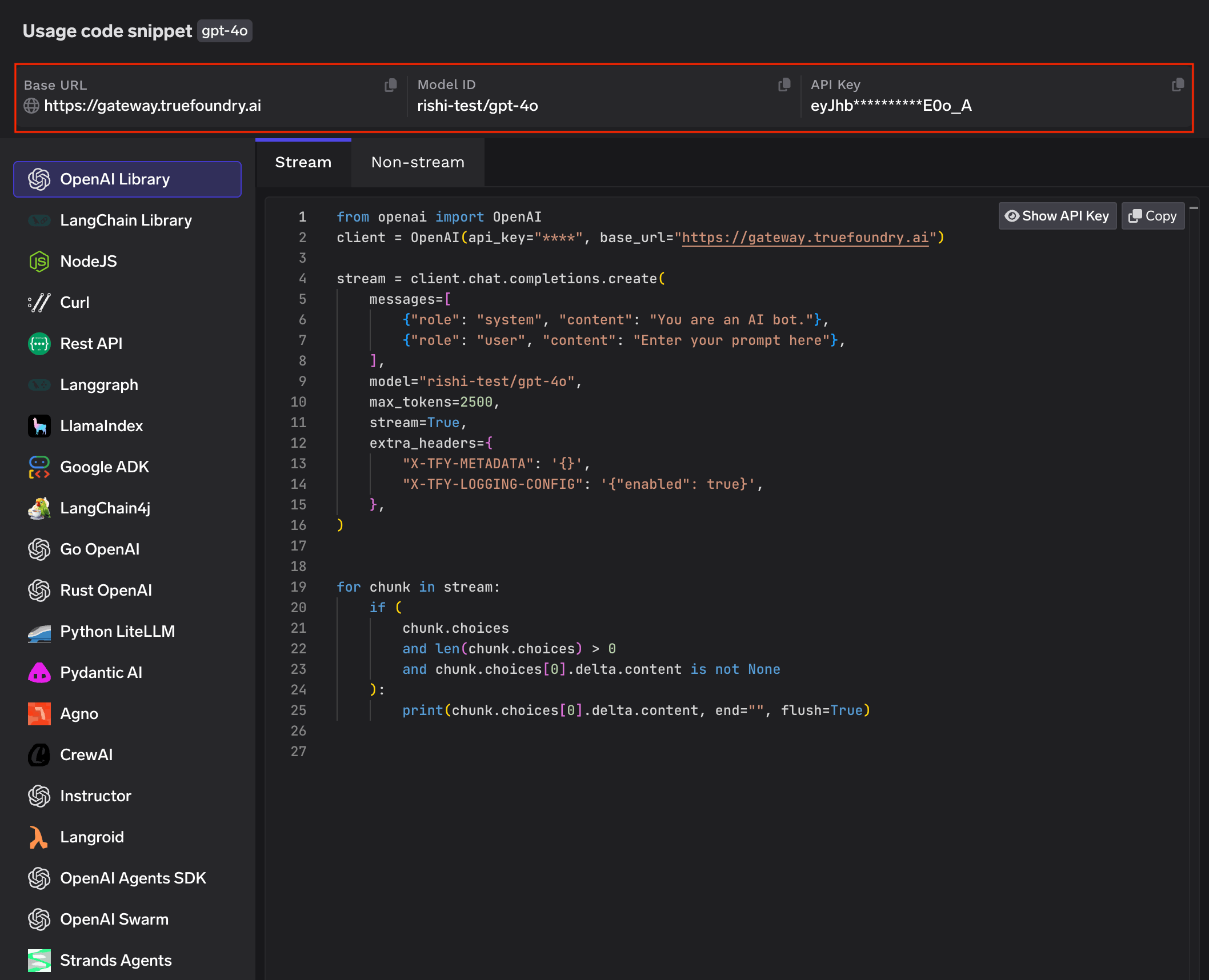
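A minimal sketch of a non-streaming chat completion through the gateway using the OpenAI Python SDK. It assumes the gateway's OpenAI-compatible base URL is `https://<gateway-host>/api/llm` (a placeholder), that your TrueFoundry API key is in a hypothetical `TFY_API_KEY` environment variable, and that the provider account is named `openai-main`, so models are addressed as `openai-main/<model>`. Adjust all three to your setup.

```python
import os

# Assumptions: placeholder gateway URL, hypothetical env var names, and a
# provider account called "openai-main" ("<provider-account>/<model>" naming).
GATEWAY_BASE_URL = os.environ.get("TFY_GATEWAY_BASE_URL", "https://<gateway-host>/api/llm")
MODEL = "openai-main/gpt-4o"


def build_messages(system: str, user: str) -> list:
    """Return an OpenAI-style message list with one system and one user turn."""
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


def make_client():
    """Create an OpenAI SDK client that talks to the gateway instead of api.openai.com."""
    from openai import OpenAI  # imported lazily; requires `pip install openai`

    return OpenAI(api_key=os.environ["TFY_API_KEY"], base_url=GATEWAY_BASE_URL)


def chat(client, question: str) -> str:
    """Send one non-streaming chat completion and return the reply text."""
    response = client.chat.completions.create(
        model=MODEL,
        messages=build_messages("You are a concise assistant.", question),
    )
    return response.choices[0].message.content
```

Call `chat(make_client(), "What does an AI gateway do?")` to try it. Only the base URL and API key differ from calling OpenAI directly.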

Streaming

Set `stream=True` to stream the response and iterate over the delta chunks. Check defensively that `chunk.choices` is non-empty and that `delta.content` is not `None`, because some chunks (role deltas, finish markers) carry no content.
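A minimal streaming sketch with the defensive checks described above. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main/gpt-4o` assumes a provider account named `openai-main`. `delta_text` and `collect_stream` are illustrative helper names.

```python
from typing import Callable, Iterable, Optional

MODEL = "openai-main/gpt-4o"  # assumption: "<provider-account>/<model>" naming


def delta_text(chunk) -> Optional[str]:
    """Return a chunk's text, or None for chunks that carry no content."""
    if not chunk.choices:  # e.g. a trailing usage-only chunk
        return None
    return chunk.choices[0].delta.content  # None for role deltas and finish markers


def collect_stream(chunks: Iterable, on_text: Optional[Callable[[str], None]] = None) -> str:
    """Concatenate streamed text, optionally forwarding each piece to on_text."""
    parts = []
    for chunk in chunks:
        text = delta_text(chunk)
        if text is None:
            continue
        if on_text is not None:
            on_text(text)
        parts.append(text)
    return "".join(parts)


def stream_answer(client, question: str) -> str:
    stream = client.chat.completions.create(
        model=MODEL,
        messages=[{"role": "user", "content": question}],
        stream=True,
    )
    # Print tokens as they arrive and return the full text at the end.
    return collect_stream(stream, on_text=lambda t: print(t, end="", flush=True))
```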

Request parameters

Request parameters such as `temperature`, `max_tokens`, `top_p`, `frequency_penalty`, `presence_penalty`, and `stop` fine-tune generation behaviour. Not every model supports every parameter. For example, `temperature` is not supported on o-series reasoning models.
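A sketch that builds sampling parameters and drops the ones o-series models reject. The set in `UNSUPPORTED_ON_REASONING` and the `o1`/`o3`/`o4` name prefixes are assumptions that vary by model, so verify them against OpenAI's reasoning-model documentation. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway.

```python
# Assumption: o-series reasoning models reject these sampling parameters. The exact
# set varies by model, so treat this tuple as a starting point, not a spec.
UNSUPPORTED_ON_REASONING = ("temperature", "top_p", "frequency_penalty", "presence_penalty")
REASONING_PREFIXES = ("o1", "o3", "o4")  # assumption: o-series model-name prefixes


def is_reasoning_model(model: str) -> bool:
    """True for o-series models, ignoring a "<provider-account>/" prefix."""
    return model.split("/")[-1].startswith(REASONING_PREFIXES)


def generation_params(model: str, temperature: float = 0.7, max_tokens: int = 256,
                      top_p: float = 1.0, frequency_penalty: float = 0.0,
                      presence_penalty: float = 0.0, stop=None) -> dict:
    """Build sampling kwargs, dropping the ones reasoning models do not accept."""
    params = {
        "temperature": temperature,
        "max_tokens": max_tokens,
        "top_p": top_p,
        "frequency_penalty": frequency_penalty,
        "presence_penalty": presence_penalty,
    }
    if stop is not None:
        params["stop"] = stop  # up to 4 stop sequences
    if is_reasoning_model(model):
        for key in UNSUPPORTED_ON_REASONING:
            params.pop(key)
        # Reasoning models take max_completion_tokens instead of max_tokens.
        params["max_completion_tokens"] = params.pop("max_tokens")
    return params


def ask(client, model: str, question: str, **overrides) -> str:
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": question}],
        **generation_params(model, **overrides),
    )
    return response.choices[0].message.content
```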

Function calling / tools

Advertise a tool in `tools`, send each of the model’s `tool_calls` back as a message with role `tool`, then request the final response. Use `tool_choice` to force the model to call a specific tool when you need deterministic behaviour, and unwrap `tool_calls` defensively, since the model may still return plain content instead of a tool call for some prompts.
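A sketch of the full tool round trip. `get_weather` is a hypothetical local function that returns canned data. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
import json

MODEL = "openai-main/gpt-4o"  # assumption: "<provider-account>/<model>" naming


def get_weather(city: str) -> dict:
    """Hypothetical local tool that returns canned data for illustration."""
    return {"city": city, "forecast": "sunny", "temp_c": 22}


LOCAL_TOOLS = {"get_weather": get_weather}
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Get the current weather for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        },
    }
]


def run_tool_call(name: str, arguments: str) -> str:
    """Execute one tool call locally and return the result as a JSON string."""
    args = json.loads(arguments or "{}")
    return json.dumps(LOCAL_TOOLS[name](**args))


def answer_with_tools(client, question: str) -> str:
    messages = [{"role": "user", "content": question}]
    first = client.chat.completions.create(
        model=MODEL,
        messages=messages,
        tools=TOOLS,
        # Force this tool; use "auto" to let the model decide.
        tool_choice={"type": "function", "function": {"name": "get_weather"}},
    )
    message = first.choices[0].message
    if not message.tool_calls:  # the model may still answer with plain content
        return message.content or ""
    messages.append(message)  # the assistant turn that requested the tools
    for call in message.tool_calls:
        messages.append({
            "role": "tool",
            "tool_call_id": call.id,
            "content": run_tool_call(call.function.name, call.function.arguments),
        })
    final = client.chat.completions.create(model=MODEL, messages=messages, tools=TOOLS)
    return final.choices[0].message.content
```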

Vision (multimodal input)

Send images as part of a message using an `image_url` content part. The URL can be a public HTTP URL or an inline `data:image/...;base64,...` URI.
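A minimal sketch showing both URL forms. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main/gpt-4o` assumes a provider account named `openai-main`.

```python
import base64

MODEL = "openai-main/gpt-4o"  # assumption: "<provider-account>/<model>" naming


def to_data_uri(image_bytes: bytes, mime: str = "image/png") -> str:
    """Encode raw image bytes as an inline data: URI."""
    return f"data:{mime};base64,{base64.b64encode(image_bytes).decode('ascii')}"


def vision_message(prompt: str, image_url: str) -> dict:
    """A user message with a text part followed by an image_url part."""
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url", "image_url": {"url": image_url}},
        ],
    }


def describe_image(client, image_url: str) -> str:
    """image_url may be a public https:// URL or a value from to_data_uri()."""
    response = client.chat.completions.create(
        model=MODEL,
        messages=[vision_message("Describe this image in one sentence.", image_url)],
    )
    return response.choices[0].message.content
```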

PDF document input

Send PDF documents as part of a message using a `file` content part with base64-encoded data.
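A sketch of a PDF content part. It follows OpenAI's `file` part shape (`filename` plus a `data:application/pdf;base64,...` value in `file_data`). `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
import base64
import os

MODEL = "openai-main/gpt-4o"  # assumption: "<provider-account>/<model>" naming


def pdf_part(pdf_bytes: bytes, filename: str) -> dict:
    """A `file` content part carrying the PDF as a base64 data URI."""
    encoded = base64.b64encode(pdf_bytes).decode("ascii")
    return {
        "type": "file",
        "file": {"filename": filename, "file_data": f"data:application/pdf;base64,{encoded}"},
    }


def summarize_pdf(client, path: str) -> str:
    with open(path, "rb") as fh:
        part = pdf_part(fh.read(), os.path.basename(path))
    response = client.chat.completions.create(
        model=MODEL,
        messages=[{
            "role": "user",
            "content": [
                {"type": "text", "text": "Summarize this document in three bullet points."},
                part,
            ],
        }],
    )
    return response.choices[0].message.content
```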

Response format (structured outputs)

Control the output format with `response_format`. Two modes are available:

- JSON object (`{"type": "json_object"}`): the output is valid JSON, but no schema is enforced. Include an instruction such as “respond in JSON” in the prompt.
- JSON schema (`{"type": "json_schema", ...}`): the output conforms strictly to the supplied schema. List every property in `required`.
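A sketch of both modes. `json_schema_format` is an illustrative helper that lists every property in `required` and disallows extra keys, as strict mode expects. The `city_info` schema is hypothetical. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
import json

MODEL = "openai-main/gpt-4o"  # assumption: "<provider-account>/<model>" naming


def json_schema_format(name: str, properties: dict) -> dict:
    """Build a strict json_schema response_format with every property required."""
    return {
        "type": "json_schema",
        "json_schema": {
            "name": name,
            "strict": True,
            "schema": {
                "type": "object",
                "properties": properties,
                "required": list(properties),  # strict mode: list all properties
                "additionalProperties": False,
            },
        },
    }


CITY_FORMAT = json_schema_format(
    "city_info",
    {
        "city": {"type": "string"},
        "country": {"type": "string"},
        "population": {"type": "integer"},
    },
)


def extract_city(client, text: str) -> dict:
    """JSON schema mode: the reply must match CITY_FORMAT."""
    response = client.chat.completions.create(
        model=MODEL,
        messages=[{"role": "user", "content": f"Extract the city details from: {text}"}],
        response_format=CITY_FORMAT,
    )
    return json.loads(response.choices[0].message.content)


def json_object_reply(client, question: str) -> dict:
    """JSON object mode: any valid JSON, so the prompt itself must ask for JSON."""
    response = client.chat.completions.create(
        model=MODEL,
        messages=[
            {"role": "system", "content": "Respond in JSON."},
            {"role": "user", "content": question},
        ],
        response_format={"type": "json_object"},
    )
    return json.loads(response.choices[0].message.content)
```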

Reasoning models (o-series)

Reasoning models (o3, o4-mini, etc.) consume a separate pool of reasoning tokens, reported in `response.usage` under `completion_tokens_details.reasoning_tokens`. Some request parameters, such as `temperature`, are not supported on these models.
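A sketch that calls an o-series model with `reasoning_effort` instead of sampling parameters and reads the reasoning-token count from `usage`. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main/o4-mini` assumes a provider account named `openai-main`.

```python
MODEL = "openai-main/o4-mini"  # assumption: "<provider-account>/<model>" naming


def reasoning_tokens(usage) -> int:
    """Reasoning tokens from a chat completion's usage block (0 if not reported)."""
    details = getattr(usage, "completion_tokens_details", None)
    return getattr(details, "reasoning_tokens", 0) or 0


def solve(client, problem: str) -> tuple:
    response = client.chat.completions.create(
        model=MODEL,
        messages=[{"role": "user", "content": problem}],
        reasoning_effort="medium",  # "low", "medium", or "high"; no temperature
        max_completion_tokens=4000,  # budget covers reasoning and visible tokens
    )
    usage = response.usage
    answer = response.choices[0].message.content
    return answer, reasoning_tokens(usage), usage.completion_tokens
```

Because the `max_completion_tokens` budget includes hidden reasoning tokens, a budget that is too small can yield an empty visible answer.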

Prompt caching

OpenAI supports automatic prompt caching for `gpt-4o` and newer models. Pass the optional `prompt_cache_key` parameter to improve cache hit rates when requests share common prefixes. Cached tokens appear in `usage.prompt_tokens_details.cached_tokens`.
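A sketch that keeps the stable content first and reads the cached-token count back. `SYSTEM_PROMPT` and the `support-bot-v1` key are hypothetical. OpenAI only caches prompts of 1,024 tokens or more, so a real shared prefix must be long. `prompt_cache_key` is passed through `extra_body` so the sketch does not depend on the SDK version exposing a typed argument. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway.

```python
MODEL = "openai-main/gpt-4o"  # assumption: "<provider-account>/<model>" naming

# Hypothetical long, stable instructions. Keep shared content at the start of the
# prompt; OpenAI only caches prompts of at least 1,024 tokens.
SYSTEM_PROMPT = "You are the support assistant for ExampleCo. <long policy text here>"


def cached_prompt_tokens(usage) -> int:
    """Cached input tokens reported in usage.prompt_tokens_details (0 if absent)."""
    details = getattr(usage, "prompt_tokens_details", None)
    return getattr(details, "cached_tokens", 0) or 0


def ask_with_cache(client, question: str, cache_key: str = "support-bot-v1") -> tuple:
    response = client.chat.completions.create(
        model=MODEL,
        messages=[
            {"role": "system", "content": SYSTEM_PROMPT},  # shared prefix first
            {"role": "user", "content": question},  # varying part last
        ],
        # extra_body passes the field even on SDK versions without a typed argument.
        extra_body={"prompt_cache_key": cache_key},
    )
    return response.choices[0].message.content, cached_prompt_tokens(response.usage)
```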

Embeddings

The embeddings endpoint accepts a single string or a list of strings and returns dense vectors suitable for semantic search, clustering, or RAG. Full docs: Embed API.
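A small semantic-search sketch built on the endpoint. `cosine_similarity` and `most_similar` are illustrative helpers. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
import math

MODEL = "openai-main/text-embedding-3-small"  # assumption: "<provider-account>/<model>" naming


def cosine_similarity(a: list, b: list) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def embed(client, texts: list) -> list:
    """Embed a batch of strings; results are ordered by their input index."""
    response = client.embeddings.create(model=MODEL, input=texts)
    return [item.embedding for item in sorted(response.data, key=lambda d: d.index)]


def most_similar(client, query: str, documents: list) -> str:
    """Return the document whose embedding is closest to the query's."""
    query_vec, *doc_vecs = embed(client, [query] + documents)
    scores = [cosine_similarity(query_vec, v) for v in doc_vecs]
    return documents[scores.index(max(scores))]
```

Embedding the query and documents in one request keeps the example to a single round trip. For larger corpora you would embed documents once and store the vectors.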

Supported models: `text-embedding-3-small`, `text-embedding-3-large`, `text-embedding-ada-002`. See the OpenAI embeddings guide for dimension and pricing details.

Responses API

OpenAI’s Responses API is a stateful alternative to chat completions. It manages conversation state on the server and supports retrieving and deleting stored responses as well as multimodal inputs. Full docs: Responses API.
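A sketch of server-side conversation state. The second turn sends only the new input plus `previous_response_id`, then the stored first response is retrieved and deleted. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
from typing import Optional

MODEL = "openai-main/gpt-4o"  # assumption: "<provider-account>/<model>" naming


def response_request(prompt: str, previous_response_id: Optional[str] = None) -> dict:
    """Build kwargs for client.responses.create, chaining to an earlier response if given."""
    request = {"model": MODEL, "input": prompt}
    if previous_response_id:
        # The server keeps the conversation; only the new turn is sent.
        request["previous_response_id"] = previous_response_id
    return request


def two_turn_conversation(client) -> str:
    first = client.responses.create(**response_request("Name one European capital."))
    second = client.responses.create(
        **response_request("Which country is it in?", previous_response_id=first.id)
    )
    stored = client.responses.retrieve(first.id)  # fetch a stored response by ID
    print("retrieved", stored.id, "status:", stored.status)
    client.responses.delete(first.id)  # remove it from server-side storage
    return second.output_text
```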

Image Generation

Generate images with DALL·E 2/3 or GPT-Image via `client.images.generate`. Depending on the model and request parameters, the response contains either `b64_json` or `url`, so handle both. Full docs: Image Generation.
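A sketch that saves a generated image whichever field the response carries. `image_bytes` is an illustrative helper. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
import base64
import urllib.request

MODEL = "openai-main/gpt-image-1"  # assumption: "<provider-account>/<model>" naming


def image_bytes(item) -> bytes:
    """Return image bytes from a response item carrying either b64_json or url."""
    if getattr(item, "b64_json", None):
        return base64.b64decode(item.b64_json)
    if getattr(item, "url", None):
        with urllib.request.urlopen(item.url) as resp:  # URLs are short-lived
            return resp.read()
    raise ValueError("image item has neither b64_json nor url")


def generate_image(client, prompt: str, path: str = "image.png") -> str:
    result = client.images.generate(model=MODEL, prompt=prompt, size="1024x1024", n=1)
    with open(path, "wb") as fh:
        fh.write(image_bytes(result.data[0]))
    return path
```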

Supported models: `gpt-image-1`, `dall-e-2`, `dall-e-3`.

Image Edit

Edit an existing image with a text prompt via `client.images.edit`. It supports the same models as image generation, with these input constraints: `gpt-image-1` accepts PNG, WebP, or JPG files up to 50 MB each and up to 16 input images, while `dall-e-2` requires a single square PNG of at most 4 MB. Full docs: Image Edit.
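A sketch that checks the constraints above before calling `images.edit`. `LIMITS` and `edit_input_problems` are illustrative names, "MB" is read as MiB (an assumption), and the square-shape rule for `dall-e-2` is not checked. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, with `openai-main` as an assumed provider-account prefix.

```python
import os

# Limits as described above; "MB" is read as MiB here (assumption).
LIMITS = {
    "gpt-image-1": {"max_images": 16, "max_bytes": 50 * 1024 * 1024,
                    "exts": {".png", ".webp", ".jpg", ".jpeg"}},
    "dall-e-2": {"max_images": 1, "max_bytes": 4 * 1024 * 1024, "exts": {".png"}},
}


def edit_input_problems(model: str, files: list) -> list:
    """Check (filename, size_in_bytes) pairs against LIMITS; an empty list means OK.

    The square-shape requirement for dall-e-2 is not checked here.
    """
    limits = LIMITS[model]
    problems = []
    if len(files) > limits["max_images"]:
        problems.append(f"{model} accepts at most {limits['max_images']} image(s)")
    for name, size in files:
        ext = os.path.splitext(name)[1].lower()
        if ext not in limits["exts"]:
            problems.append(f"{name}: unsupported format {ext or '(none)'}")
        if size > limits["max_bytes"]:
            problems.append(f"{name}: exceeds {limits['max_bytes'] // (1024 * 1024)} MB")
    return problems


def edit_images(client, model: str, paths: list, prompt: str):
    problems = edit_input_problems(model, [(p, os.path.getsize(p)) for p in paths])
    if problems:
        raise ValueError("; ".join(problems))
    handles = [open(p, "rb") for p in paths]
    try:
        result = client.images.edit(
            model=f"openai-main/{model}",  # assumption: provider-account prefix
            image=handles if len(handles) > 1 else handles[0],
            prompt=prompt,
        )
    finally:
        for handle in handles:
            handle.close()
    return result.data[0]
```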

Image Variation

Two approaches are available:

- Legacy: `create_variation` (dall-e-2 only, deprecated). The original variation API accepts an image and returns creative variations. It requires a square PNG of at most 4 MB and only works with dall-e-2. Full docs: Image Variation.
- Modern: `images.edit` with a variation prompt (gpt-image-1). The recommended replacement is to call `images.edit` with a variation-style prompt. This works with current models and produces similar results.
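A sketch of both approaches. `png_dimensions` reads width and height from the PNG `IHDR` header so the legacy path can enforce the square-PNG rule locally. `VARIATION_PROMPT` is example wording, and "4 MB" is read as MiB (an assumption). `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, with `openai-main` as an assumed provider account.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
MAX_DALLE2_BYTES = 4 * 1024 * 1024  # "4 MB" read as MiB (assumption)
VARIATION_PROMPT = (
    "Create a variation of this image: keep the subject and composition, "
    "but vary the colours, lighting, and small details."
)  # example wording, tune to taste


def png_dimensions(header: bytes) -> tuple:
    """Read (width, height) from the IHDR chunk at the start of a PNG file."""
    if header[:8] != PNG_SIGNATURE or header[12:16] != b"IHDR":
        raise ValueError("not a PNG file")
    return struct.unpack(">II", header[16:24])


def legacy_variation(client, path: str, n: int = 2):
    """Deprecated dall-e-2 variations; input must be a square PNG of at most 4 MB."""
    with open(path, "rb") as fh:
        data = fh.read()
    width, height = png_dimensions(data)
    if width != height or len(data) > MAX_DALLE2_BYTES:
        raise ValueError("dall-e-2 needs a square PNG of at most 4 MB")
    return client.images.create_variation(
        model="openai-main/dall-e-2", image=("input.png", data), n=n, size="512x512"
    )


def modern_variation(client, path: str, prompt: str = VARIATION_PROMPT):
    """Recommended replacement: images.edit on gpt-image-1 with a variation-style prompt."""
    with open(path, "rb") as fh:
        return client.images.edit(model="openai-main/gpt-image-1", image=fh, prompt=prompt)
```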

Text-to-Speech

Stream spoken audio from text with OpenAI’s TTS models. Use `client.audio.speech.with_streaming_response.create(...)` to stream the response body directly to a file or iterate over it chunk by chunk (asynchronously when using `AsyncOpenAI`). Full docs: Text-to-Speech.
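A sketch that streams speech to MP3 files. Because OpenAI limits the TTS `input` field to 4,096 characters, long text is first split with `split_for_tts`, an illustrative helper that packs words greedily. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
MODEL = "openai-main/gpt-4o-mini-tts"  # assumption: "<provider-account>/<model>" naming
MAX_INPUT_CHARS = 4096  # OpenAI's documented limit for the TTS `input` field


def split_for_tts(text: str, limit: int = MAX_INPUT_CHARS) -> list:
    """Greedily pack words into chunks of at most `limit` characters.

    A single word longer than `limit` is kept whole rather than cut.
    """
    chunks, current = [], ""
    for word in text.split():
        candidate = f"{current} {word}" if current else word
        if len(candidate) <= limit:
            current = candidate
        else:
            if current:
                chunks.append(current)
            current = word
    if current:
        chunks.append(current)
    return chunks


def speak(client, text: str, prefix: str = "speech", voice: str = "alloy") -> list:
    """Stream each chunk's MP3 audio straight to its own file."""
    paths = []
    for i, chunk in enumerate(split_for_tts(text)):
        path = f"{prefix}-{i}.mp3"
        with client.audio.speech.with_streaming_response.create(
            model=MODEL, voice=voice, input=chunk, response_format="mp3"
        ) as response:
            response.stream_to_file(path)
        paths.append(path)
    return paths
```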

Supported models: `gpt-4o-mini-tts`, `tts-1`, `tts-1-hd`. Supported voices include `alloy`, `echo`, `fable`, `onyx`, `nova`, and `shimmer`. See the OpenAI TTS guide for the full list of voices and audio formats.

Speech-to-Text

Transcribe audio files via `client.audio.transcriptions.create`. Full docs: Audio Transcription.
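A sketch that pre-checks a file and transcribes it. The accepted extensions and the 25 MB cap come from OpenAI's speech-to-text guide, with the cap read as MiB here (an assumption). `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
import os
from typing import Optional

MODEL = "openai-main/gpt-4o-mini-transcribe"  # assumption: provider-account prefix
# Upload formats and size limit from OpenAI's speech-to-text guide.
AUDIO_EXTS = {".flac", ".mp3", ".mp4", ".mpeg", ".mpga", ".m4a", ".ogg", ".wav", ".webm"}
MAX_AUDIO_BYTES = 25 * 1024 * 1024


def audio_file_problem(name: str, size: int) -> Optional[str]:
    """Return a reason the file would be rejected, or None if it looks acceptable."""
    ext = os.path.splitext(name)[1].lower()
    if ext not in AUDIO_EXTS:
        return f"unsupported audio format: {ext or '(none)'}"
    if size > MAX_AUDIO_BYTES:
        return "file is larger than 25 MB; split it first"
    return None


def transcribe(client, path: str, language: Optional[str] = None) -> str:
    problem = audio_file_problem(path, os.path.getsize(path))
    if problem:
        raise ValueError(problem)
    kwargs = {"model": MODEL}
    if language:
        kwargs["language"] = language  # optional ISO-639-1 hint, e.g. "en"
    with open(path, "rb") as fh:
        result = client.audio.transcriptions.create(file=fh, **kwargs)
    return result.text
```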

Supported models: `whisper-1`, `gpt-4o-transcribe`, `gpt-4o-mini-transcribe`.

Audio Translation

Translate audio in any supported source language into English text via `client.audio.translations.create`. This endpoint uses the same `whisper-1` model as transcription, and the output is always English. Full docs: Audio Translation.

A convenient self-contained test is a round trip: generate a short French TTS sample inline and translate it back to English. This avoids the need for an external audio file.
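A sketch of the TTS-to-translation round trip described above. The French sample sentence is hypothetical, and the audio is passed as a `(filename, bytes)` upload so the `.mp3` extension tells the API the format. `client` is assumed to be an `openai.OpenAI` instance pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
TTS_MODEL = "openai-main/tts-1"  # assumption: "<provider-account>/<model>" naming
TRANSLATION_MODEL = "openai-main/whisper-1"
FRENCH_SAMPLE = "Bonjour, je voudrais réserver une table pour deux personnes ce soir."


def audio_upload(data: bytes, fmt: str = "mp3") -> tuple:
    """Wrap raw audio bytes as a (filename, bytes) upload; the extension hints the format."""
    return (f"sample.{fmt}", data)


def translate_round_trip(client, text: str = FRENCH_SAMPLE) -> str:
    """Speak French text with TTS, then translate the audio back to English text."""
    speech = client.audio.speech.create(
        model=TTS_MODEL, voice="alloy", input=text, response_format="mp3"
    )
    translation = client.audio.translations.create(
        model=TRANSLATION_MODEL, file=audio_upload(speech.content, "mp3")
    )
    return translation.text  # always English
```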

Batch API

Process large volumes of requests asynchronously, at lower cost and higher throughput than synchronous inference. The flow is: upload JSONL → create batch → poll for completion → download results. Full docs: Batch Predictions.
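A sketch of the upload → create → poll → download flow. Two details are assumptions to check against the Batch Predictions docs. First, the client sends an `x-tfy-provider-name` header naming the provider account (inferred from the Moderation note on this page). Second, the request bodies use the bare upstream model name. `openai-main` is a hypothetical provider-account name.

```python
import json
import time

PROVIDER_ACCOUNT = "openai-main"  # hypothetical provider-account name
TERMINAL = {"completed", "failed", "expired", "cancelled"}


def build_batch_jsonl(prompts: list, model: str = "gpt-4o-mini") -> str:
    """One /v1/chat/completions request per line, keyed by a unique custom_id."""
    lines = [
        json.dumps({
            "custom_id": f"request-{i}",
            "method": "POST",
            "url": "/v1/chat/completions",
            "body": {"model": model, "messages": [{"role": "user", "content": p}]},
        })
        for i, p in enumerate(prompts)
    ]
    return "\n".join(lines) + "\n"


def run_batch(api_key: str, base_url: str, prompts: list, poll_seconds: int = 30) -> list:
    from openai import OpenAI  # lazy import

    client = OpenAI(
        api_key=api_key,
        base_url=base_url,
        default_headers={"x-tfy-provider-name": PROVIDER_ACCOUNT},  # assumption
    )
    upload = client.files.create(
        file=("batch.jsonl", build_batch_jsonl(prompts).encode("utf-8")), purpose="batch"
    )
    batch = client.batches.create(
        input_file_id=upload.id, endpoint="/v1/chat/completions", completion_window="24h"
    )
    while batch.status not in TERMINAL:
        time.sleep(poll_seconds)
        batch = client.batches.retrieve(batch.id)
    if batch.status != "completed" or not batch.output_file_id:
        raise RuntimeError(f"batch finished with status {batch.status}")
    output = client.files.content(batch.output_file_id).text
    return [json.loads(line) for line in output.splitlines() if line.strip()]
```

Results may come back in a different order from the input, so match them to requests by `custom_id` rather than by position.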

Files API

Upload, list, retrieve, and delete files held by the gateway (used by Batch and Fine-tuning). Full docs: Files API.
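A sketch of the full file lifecycle. It assumes file operations need the `x-tfy-provider-name` header naming the provider account (inferred from the Moderation note on this page; `openai-main` is hypothetical). It also validates the JSONL locally before uploading, since Batch and Fine-tuning inputs are JSONL. `count_jsonl_records` is an illustrative helper.

```python
import json

PROVIDER_ACCOUNT = "openai-main"  # hypothetical provider-account name


def provider_headers(account: str = PROVIDER_ACCOUNT) -> dict:
    """Header that (by assumption) tells the gateway which provider account owns the files."""
    return {"x-tfy-provider-name": account}


def count_jsonl_records(text: str) -> int:
    """Validate JSONL (one JSON object per non-blank line) and return the record count."""
    count = 0
    for lineno, line in enumerate(text.splitlines(), start=1):
        if not line.strip():
            continue
        if not isinstance(json.loads(line), dict):  # raises ValueError on bad JSON
            raise ValueError(f"line {lineno} is not a JSON object")
        count += 1
    return count


def file_lifecycle(api_key: str, base_url: str, path: str, purpose: str = "batch") -> dict:
    from openai import OpenAI  # lazy import

    client = OpenAI(api_key=api_key, base_url=base_url, default_headers=provider_headers())
    with open(path, encoding="utf-8") as fh:
        count_jsonl_records(fh.read())  # fail fast before uploading
    with open(path, "rb") as fh:
        uploaded = client.files.create(file=fh, purpose=purpose)
    listed = any(f.id == uploaded.id for f in client.files.list())
    info = client.files.retrieve(uploaded.id)
    deleted = client.files.delete(uploaded.id).deleted
    return {"id": uploaded.id, "listed": listed, "bytes": info.bytes, "deleted": deleted}
```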

Moderation

Identify policy-violating content via `client.moderations.create`. Requests route through the regular client (no `x-tfy-provider-name` header needed). Full docs: Moderation API.
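A sketch of a multimodal moderation call that returns the flag plus the names of the triggered categories. `flagged_categories` is an illustrative helper. `client` is assumed to be the regular `openai.OpenAI` client pointed at the gateway, and `openai-main` is an assumed provider-account name.

```python
from typing import Optional

MODEL = "openai-main/omni-moderation-latest"  # assumption: "<provider-account>/<model>" naming


def flagged_categories(categories: dict) -> list:
    """Names of the categories set to True, sorted for stable output."""
    return sorted(name for name, hit in categories.items() if hit)


def moderate(client, text: str, image_url: Optional[str] = None) -> tuple:
    inputs = [{"type": "text", "text": text}]
    if image_url:  # omni-moderation-latest also accepts images
        inputs.append({"type": "image_url", "image_url": {"url": image_url}})
    response = client.moderations.create(model=MODEL, input=inputs)
    result = response.results[0]
    return result.flagged, flagged_categories(result.categories.model_dump())
```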

Supported models: `omni-moderation-latest` (multimodal: text and image; recommended) and `text-moderation-latest` (legacy, text-only).

Fine-tuning

Submit a fine-tuning job for a chat model. The full lifecycle is: upload a JSONL training file → create job → poll → use the resulting model ID. Full docs: Finetune API.
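A sketch of the upload → create job → poll → model ID lifecycle. Several details are assumptions: the `x-tfy-provider-name` header (inferred from the Moderation note on this page), the hypothetical `openai-main` account, and the bare upstream base model name `gpt-4o-mini-2024-07-18`. `training_line` serializes one chat-format example. OpenAI requires at least 10 examples per training file.

```python
import json
import time

PROVIDER_ACCOUNT = "openai-main"  # hypothetical provider-account name
TERMINAL = {"succeeded", "failed", "cancelled"}


def training_line(system: str, user: str, assistant: str) -> str:
    """One chat-format training example, serialized as a JSONL line."""
    return json.dumps({
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
            {"role": "assistant", "content": assistant},
        ]
    })


def fine_tune(api_key: str, base_url: str, jsonl_path: str,
              base_model: str = "gpt-4o-mini-2024-07-18", poll_seconds: int = 60) -> str:
    from openai import OpenAI  # lazy import

    client = OpenAI(api_key=api_key, base_url=base_url,
                    default_headers={"x-tfy-provider-name": PROVIDER_ACCOUNT})  # assumption
    with open(jsonl_path, "rb") as fh:
        training = client.files.create(file=fh, purpose="fine-tune")
    job = client.fine_tuning.jobs.create(training_file=training.id, model=base_model)
    while job.status not in TERMINAL:
        time.sleep(poll_seconds)
        job = client.fine_tuning.jobs.retrieve(job.id)
    if job.status != "succeeded":
        raise RuntimeError(f"fine-tuning job ended with status {job.status}")
    return job.fine_tuned_model  # use this model ID for inference
```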

Realtime API

OpenAI’s Realtime API streams full-duplex audio (and text) over a WebSocket, enabling low-latency voice interactions. On TrueFoundry, the realtime endpoint lives at:

`wss://{gateway-host}/live/{provider-account}`

Note the two quirks:

- The host is the bare gateway host, with no `/api/llm` suffix.
- The OpenAI provider-account name is encoded in the URL path, not in the model name. The model passed to `client.realtime.connect(model=...)` is the bare upstream OpenAI name (e.g. `gpt-4o-realtime-preview`).

Use `AsyncOpenAI` from `openai[realtime]`, which handles the WebSocket framing and event schema for you. Full docs: Realtime API.
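A text-only sketch, assuming a recent `openai` release installed as `openai[realtime]` that exposes the GA `client.realtime` interface. Session and event names such as `output_modalities` and `response.output_text.delta` differ in the older `client.beta.realtime` interface. `realtime_ws_base` is a hypothetical helper that builds the `wss://{gateway-host}/live/{provider-account}` base, and `openai-main` is the account name used elsewhere on this page.

```python
def realtime_ws_base(gateway_host: str, provider_account: str = "openai-main") -> str:
    """wss:// base for the gateway's realtime route: bare host, account in the path."""
    host = gateway_host.removeprefix("https://").removeprefix("wss://").rstrip("/")
    return f"wss://{host}/live/{provider_account}"


async def say_hello(api_key: str, gateway_host: str,
                    model: str = "gpt-4o-realtime-preview") -> str:
    """Open a text-only realtime session and return the model's reply."""
    from openai import AsyncOpenAI  # requires `pip install "openai[realtime]"`

    client = AsyncOpenAI(api_key=api_key, websocket_base_url=realtime_ws_base(gateway_host))
    reply = []
    # Bare upstream model name: the provider account is already in the URL path.
    async with client.realtime.connect(model=model) as connection:
        await connection.session.update(
            session={"type": "realtime", "output_modalities": ["text"]}
        )
        await connection.conversation.item.create(
            item={
                "type": "message",
                "role": "user",
                "content": [{"type": "input_text", "text": "Say hello!"}],
            }
        )
        await connection.response.create()
        async for event in connection:
            if event.type == "response.output_text.delta":
                reply.append(event.delta)
            elif event.type == "response.done":
                break
    return "".join(reply)
```

Run it with `asyncio.run(say_hello(api_key, "<gateway-host>"))`.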

For full-duplex audio (microphone input and speaker output), set `output_modalities: ["audio"]`, add an `audio.input.turn_detection` block, and stream PCM chunks through `connection.input_audio_buffer.append`. The OpenAI realtime audio reference includes a complete `sounddevice`-based example. It works against the gateway unchanged once `websocket_base_url` points at `wss://{host}/live/openai-main`.

Regional Endpoints

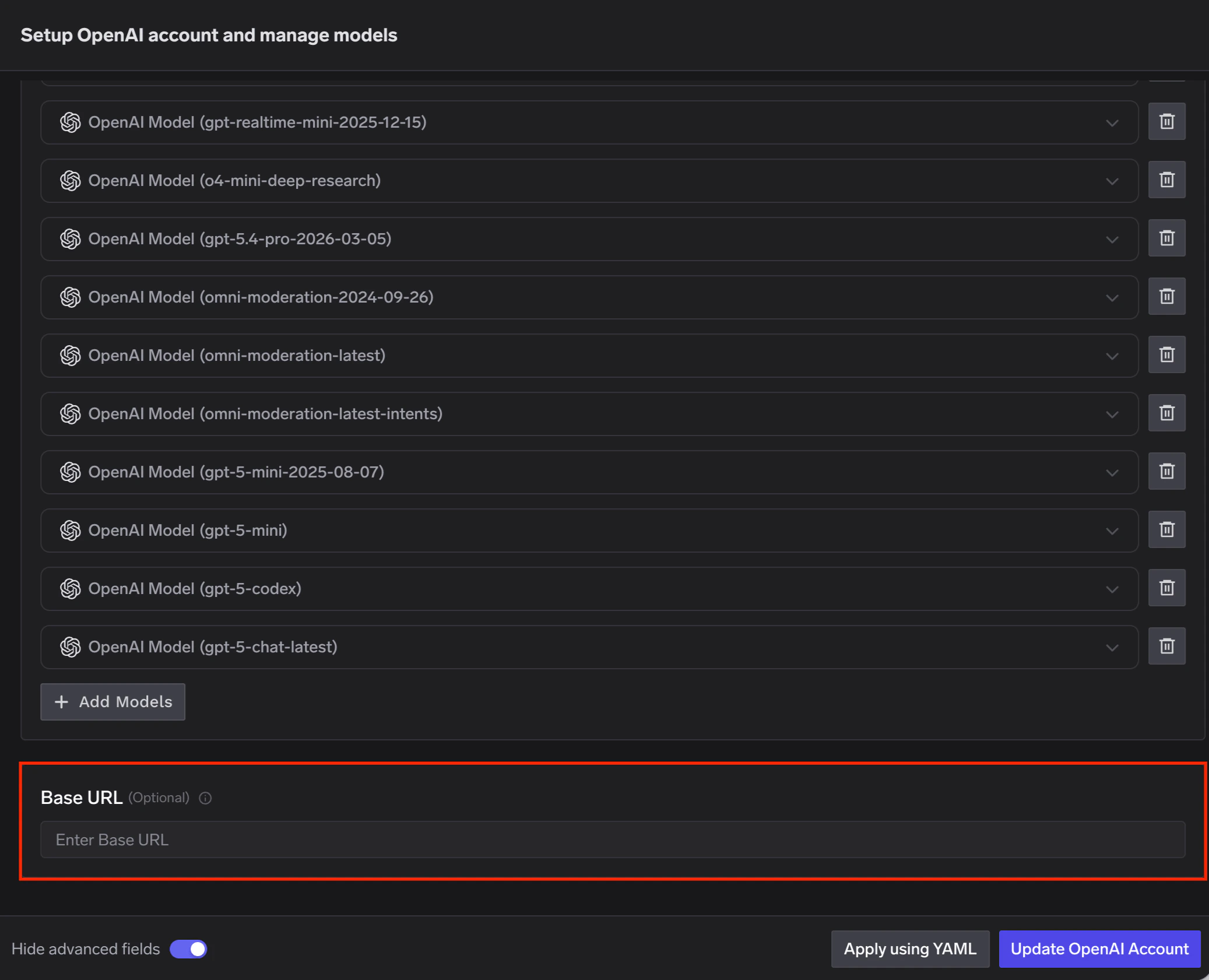

OpenAI offers data residency controls that let you configure the region where your data is stored and, in some regions, processed. When data residency is enabled on your OpenAI account, you may need to use a region-specific domain prefix for API requests instead of the default `api.openai.com`.

When adding an OpenAI account in TrueFoundry AI Gateway, set the Base URL to the appropriate regional endpoint for your OpenAI project.

| Region | Domain Prefix | Base URL |
|---|---|---|
| US | us.api.openai.com (required) | https://us.api.openai.com/v1 |
| Europe (EEA + Switzerland) | eu.api.openai.com (required) | https://eu.api.openai.com/v1 |
| Australia | au.api.openai.com (optional) | https://au.api.openai.com/v1 |
| Canada | ca.api.openai.com (optional) | https://ca.api.openai.com/v1 |
| Japan | jp.api.openai.com (optional) | https://jp.api.openai.com/v1 |
| India | in.api.openai.com (optional) | https://in.api.openai.com/v1 |
| Singapore | sg.api.openai.com (optional) | https://sg.api.openai.com/v1 |
| South Korea | kr.api.openai.com (optional) | https://kr.api.openai.com/v1 |
| United Kingdom | gb.api.openai.com (required) | https://gb.api.openai.com/v1 |
| United Arab Emirates | ae.api.openai.com (required) | https://ae.api.openai.com/v1 |

Regions marked as (required) must use the regional domain prefix for all requests. Regions marked as (optional) can use the prefix to improve latency, but it is not mandatory.
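A small lookup sketch for choosing the Base URL value to enter when adding the account. `REGIONS`, `openai_base_url`, and `prefix_required` are hypothetical names. The region codes and required/optional flags are copied from the table above.

```python
# Region codes and required/optional flags copied from the table above.
REGIONS = {
    "us": True, "eu": True, "gb": True, "ae": True,  # prefix required
    "au": False, "ca": False, "jp": False, "in": False, "sg": False, "kr": False,  # optional
}
DEFAULT_BASE_URL = "https://api.openai.com/v1"


def openai_base_url(region=None) -> str:
    """Base URL for the account's Base URL field (the default when region is None)."""
    if region is None:
        return DEFAULT_BASE_URL
    code = region.lower()
    if code not in REGIONS:
        raise ValueError(f"unknown OpenAI region code: {region}")
    return f"https://{code}.api.openai.com/v1"


def prefix_required(region: str) -> bool:
    """True if the table marks the regional prefix as required for this region."""
    return REGIONS[region.lower()]
```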

Non-US regions require approval for Modified Abuse Monitoring or Zero Data Retention on your OpenAI account. For full details on data residency, supported models, and endpoint limitations, refer to the OpenAI data controls documentation.