/aiguard/v1/guard_chat_completions endpoint and runs inline with every model request, so you get block / redact / audit behaviour directly from the gateway with no adapter service to host.

CrowdStrike AIDR is the evolution of the Pangea AI Guard product (acquired by CrowdStrike in 2025). Existing Pangea users are asked to migrate to AIDR.

What is CrowdStrike AIDR?

CrowdStrike AI Detection & Response (AIDR) is the AI Guard module of CrowdStrike’s AI security suite. It analyses prompts, completions, tool calls, and tool outputs in the OpenAI Chat Completions format and applies the policies you configure in the AIDR console, including malicious prompt detection, PII / secrets redaction, MCP tool validation, language and topic policies, and access rules.

Key capabilities

- Security analysis — Detect prompt injection, jailbreaks, malicious tool descriptions, conflicting MCP tool names, and policy violations on both inputs and outputs.

- Content transformation — Mutate flagged content in place (redact PII, encrypt secrets with format-preserving encryption) instead of blocking the whole request, so legitimate traffic keeps flowing.

- Policy-driven enforcement — Each AIDR collector can run a different Input Policy and Output Policy, so you can express “audit only” on inputs but “hard block” on outputs (or any combination) per environment.

- Findings & audit — Every analysed request is logged in the AIDR Findings page with the original input, processed output, and detector verdicts. Correlate AIDR findings back to specific gateway calls using TrueFoundry request logs.

Adding CrowdStrike AIDR to TrueFoundry

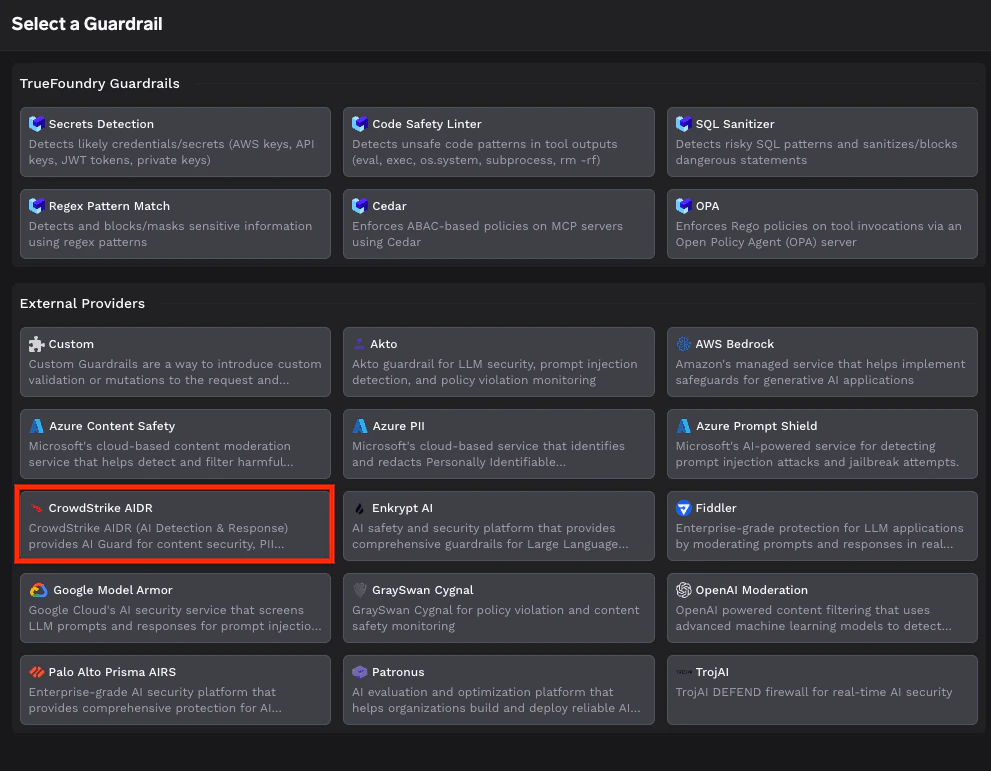

CrowdStrike AIDR is a first-class guardrail in the gateway: you configure it through the same form as any other built-in guardrail, with no adapter service required.

Pick CrowdStrike AIDR from the guardrail registry

From AI Gateway → Guardrails → Registry, select CrowdStrike AIDR under External Providers. See Get started with guardrails for the end-to-end flow of adding any guardrail.

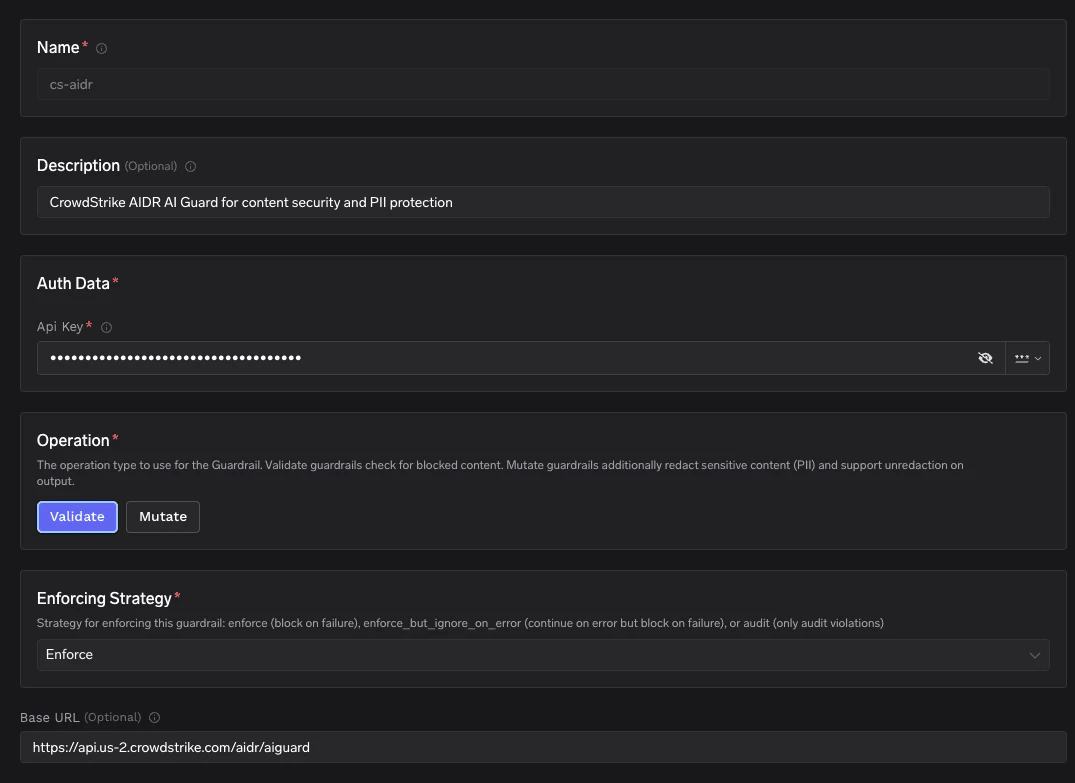

Fill in the CrowdStrike AIDR form

Provide the following fields:

| Field | Required | Description |
|---|---|---|
| Name | ✅ | Identifier for this guardrail (e.g. cs-aidr). Used when you reference the guardrail from rules. |
| Description | | Free-form description shown in the dashboard. |
| Auth Data → API Key | ✅ | Bearer token for your AIDR collector. Generate one from the AIDR console under your Application collector → Tokens. Stored encrypted. |
| Operation | ✅ | Validate checks for blocked content only. Mutate additionally accepts AIDR’s guard_output so PII / secrets can be redacted in place. This field is part of the CrowdStrike AIDR guardrail definition. |
| Base URL | | AIDR API base URL. Defaults to https://api.crowdstrike.com/aidr/aiguard. Use a regional endpoint such as https://api.us-2.crowdstrike.com/aidr/aiguard if your AIDR tenant lives in a different region. |
| Enforcing Strategy | ✅ | TrueFoundry platform setting (not specific to AIDR). Enforce blocks on any violation or guardrail error. Enforce But Ignore On Error blocks on violations but lets the request through on guardrail failures (timeouts, 5xx). Audit never blocks; violations are logged only. The same field is exposed on every guardrail integration. |

Keep the AIDR API key in TrueFoundry only; it should never appear in client code or model request bodies. The gateway attaches it as `Authorization: Bearer <token>` on every call to AIDR.

Advanced parameters

These are TrueFoundry platform-level guardrail parameters and apply to AIDR the same way they apply to any other guardrail integration:

| Parameter | Default | Description |
|---|---|---|
| enforce_on_detection | true | When true, the gateway treats any detector firing (`detectors.*.detected = true`) as a block, even if AIDR’s own policy is in monitor / report mode and returns result.blocked = false. Set to false if you want the gateway to defer entirely to the AIDR policy verdict. |
| timeout | gateway default | Maximum time the gateway will wait for an AIDR response before treating the call as a failure. Pair with Enforce But Ignore On Error to fail open on slow AIDR responses. |

Bind the guardrail to models with a rule

Once the guardrail is saved, attach it to one or more models through a Guardrail Rule. Use `llm_input_guardrails` to scan prompts before they reach the model and `llm_output_guardrails` to scan completions before they’re returned to the caller. See Configure Guardrail Rules for the full rule schema (model selectors, user filters, fallback policies).

How the gateway calls AIDR

For each model request the gateway converts the chat payload into AIDR’s `guard_input` shape and calls the configured base URL. You don’t need to write or host any code for this; the section below documents the wire format only as a reference for debugging request logs.

Endpoints used

| Purpose | Method | Path |
|---|---|---|
| Run a guard check on input or output | POST | {baseUrl}/v1/guard_chat_completions |
| Restore FPE-redacted values on the output leg of Mutate (when AIDR returned an fpe_context) | POST | {baseUrl}/v1/unredact |
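For debugging, it can help to see how the two paths combine with the Base URL and the bearer token. The following is a minimal sketch, not the gateway's actual code: `build_aidr_request` is a hypothetical helper, the `Content-Type` header is an assumption, and the request is only constructed, never sent.

```python
import json
from urllib.request import Request

# Default Base URL from the guardrail form; override for regional tenants.
DEFAULT_BASE_URL = "https://api.crowdstrike.com/aidr/aiguard"


def build_aidr_request(path: str, body: dict, token: str,
                       base_url: str = DEFAULT_BASE_URL) -> Request:
    """Build (but do not send) a POST request to an AIDR endpoint.

    Hypothetical helper for illustration only.
    """
    url = base_url.rstrip("/") + "/" + path.lstrip("/")
    return Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            # Assumption: JSON bodies are sent with this content type.
            "Content-Type": "application/json",
        },
        method="POST",
    )


guard_req = build_aidr_request(
    "v1/guard_chat_completions", {"event_type": "input"}, token="example-token"
)
unredact_req = build_aidr_request(
    "v1/unredact", {}, token="example-token",
    base_url="https://api.us-2.crowdstrike.com/aidr/aiguard",
)
print(guard_req.full_url)
print(unredact_req.full_url)
```

The request bodies here are placeholders; the actual payload fields are described under Request shape.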

Request shape

The gateway sends a minimal `guard_chat_completions` payload containing only the fields it actually needs to drive AIDR’s verdict:

- Messages are concatenated, not forwarded turn-by-turn. The original chat history (system / user / assistant / tool messages) is collapsed into a single `user`-role message containing the joined text. AIDR therefore sees one logical input per call rather than the structured conversation. This is the trade-off the gateway makes to keep the integration simple and provider-agnostic; if you need turn-aware analysis, run AIDR closer to the application using its native SDK.

- `event_type` is `input` for the prompt leg (before the model) and `output` for the completion leg (after the model). AIDR uses it to pick the matching Input Policy or Output Policy from your collector.

- No TrueFoundry routing metadata is sent. Fields like `app_id`, `user_id`, `model`, `tools`, or `span_id` from the AIDR API spec are not populated by the gateway today. AIDR Findings will show the request without app/user/model attribution; correlate back through TrueFoundry request logs instead.

- FPE round-trip on output. When `Operation = Mutate` is enabled and the input leg redacted values with format-preserving encryption, the gateway passes the resulting `fpe_context` back to AIDR on the output leg as `input_fpe_context` so the same encryption keys are used end-to-end.
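The payload described above can be sketched roughly as follows. This is an illustration under stated assumptions, not the gateway's implementation: the helper names are hypothetical, the newline joiner is a guess, and the exact nesting of `messages` under `guard_input` and the top-level placement of `input_fpe_context` are assumptions to check against the AIDR API reference.

```python
from typing import Any, Optional


def collapse_messages(messages: list[dict[str, Any]]) -> list[dict[str, str]]:
    """Join the text of every turn into a single user-role message."""
    parts = [
        m["content"]
        for m in messages
        if isinstance(m.get("content"), str) and m["content"]
    ]
    # Assumption: turns are joined with a newline; the real joiner may differ.
    return [{"role": "user", "content": "\n".join(parts)}]


def build_guard_payload(messages: list[dict[str, Any]], event_type: str,
                        input_fpe_context: Optional[str] = None) -> dict:
    """Build a minimal guard_chat_completions body for one leg."""
    if event_type not in ("input", "output"):
        raise ValueError("event_type must be 'input' or 'output'")
    payload: dict[str, Any] = {
        # Assumption: messages are nested under guard_input.
        "guard_input": {"messages": collapse_messages(messages)},
        "event_type": event_type,
    }
    # Only the output leg carries the FPE context from the input leg.
    if event_type == "output" and input_fpe_context is not None:
        payload["input_fpe_context"] = input_fpe_context
    return payload


chat = [
    {"role": "system", "content": "Be brief."},
    {"role": "user", "content": "Hi"},
]
input_payload = build_guard_payload(chat, "input")
output_payload = build_guard_payload(
    [{"role": "assistant", "content": "Hello"}], "output",
    input_fpe_context="opaque-context",
)
print(input_payload)
```

Note that no `app_id`, `user_id`, `model`, `tools`, or `span_id` fields appear in the sketch, matching the behaviour described in the list.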

Response shape and how the gateway interprets it

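The gateway derives its decision from the fields AIDR returns (result.blocked, the transformed flag, result.detectors, and result.guard_output) together with the enforce_on_detection flag.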

When the gateway considers the call a “violation”

A violation is raised when either of these is true:

- `result.blocked == true` (AIDR’s own policy decided to block), or

- any detector under `result.detectors` has `detected == true` and `enforce_on_detection` is `true` (the default). This catches cases where AIDR is intentionally running in monitor mode (`blocked = false`) but you still want the gateway to enforce.
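The rule above can be expressed as a small predicate. This is a sketch only: `is_violation` is a hypothetical name, and it assumes `result.detectors` is a mapping from detector name to an object carrying a boolean `detected` field.

```python
def is_violation(result: dict, enforce_on_detection: bool = True) -> bool:
    """Return True when the gateway would treat an AIDR result as a violation."""
    # Case 1: AIDR's own policy decided to block.
    if result.get("blocked") is True:
        return True
    # Case 2: a detector fired and the gateway is configured to enforce on it.
    if not enforce_on_detection:
        return False
    detectors = result.get("detectors") or {}
    return any(
        isinstance(d, dict) and d.get("detected") is True
        for d in detectors.values()
    )


# AIDR in monitor mode: it did not block, but a detector fired.
monitor_result = {"blocked": False, "detectors": {"pii": {"detected": True}}}
print(is_violation(monitor_result))
print(is_violation(monitor_result, enforce_on_detection=False))
```

With the default setting the monitor-mode result counts as a violation; with `enforce_on_detection` set to false the gateway defers to AIDR's `blocked` verdict.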

Outcome matrix

| AIDR response | Operation = Validate | Operation = Mutate |
|---|---|---|
| Violation (per the rule above) | Gateway returns HTTP 400 with AIDR’s summary in the error body. | Same as Validate: once a violation is raised, Mutate does not soften it into a redaction. |
| blocked = false, transformed = true, no detector flagged (or enforce_on_detection = false) | Detection (if any) logged. Original payload is forwarded unchanged; Validate never rewrites content. | Input leg: result.guard_output (with PII / secrets redacted in place) is what the model sees; the caller’s original text is replaced before it leaves the gateway. Output leg: result.guard_output is what the caller sees, and any FPE-encrypted values are decrypted via /v1/unredact (using the fpe_context returned by AIDR on the input leg) before the response is returned. |
| blocked = false, transformed = false, no detector flagged | Forwarded unchanged. | Forwarded unchanged. |
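The matrix can be read as a small decision function. The sketch below is illustrative rather than the gateway's code: `resolve_outcome` and the lowercase operation names are hypothetical, content is simplified to a plain string, and the `/v1/unredact` step on the output leg is left out.

```python
from typing import Optional


def resolve_outcome(violation: bool, transformed: bool, operation: str,
                    original: str,
                    guard_output: Optional[str] = None) -> tuple[str, Optional[str]]:
    """Map one AIDR verdict to (action, content to forward)."""
    if operation not in ("validate", "mutate"):
        raise ValueError("operation must be 'validate' or 'mutate'")
    # Row 1: a violation blocks in both modes (HTTP 400 to the caller).
    if violation:
        return ("block", None)
    # Row 2: only Mutate swaps in AIDR's transformed content.
    if operation == "mutate" and transformed and guard_output is not None:
        return ("forward", guard_output)
    # Row 2 under Validate, and row 3: forward the original unchanged.
    return ("forward", original)


original_text = "my card is 4111 1111 1111 1111"
redacted_text = "my card is <REDACTED>"
print(resolve_outcome(False, True, "validate", original_text, redacted_text))
print(resolve_outcome(False, True, "mutate", original_text, redacted_text))
```

The redacted string is an invented placeholder; the actual form of redacted content depends on the policies configured in AIDR.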

How Enforcing Strategy layers on top

The matrix above describes “what counts as a violation”. Enforcing Strategy then decides what the gateway does about violations and about its own errors talking to AIDR:

- Enforce: violations block, AIDR errors block. Fail closed.

- Enforce But Ignore On Error: violations block, AIDR errors (timeouts, 5xx, parsing failures) are logged but the request is forwarded. Fail open on infra issues, fail closed on policy.

- Audit: nothing blocks. Every violation is logged for review only. Use this while tuning AIDR policies.
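The three strategies reduce to a short lookup over two inputs: whether a violation was raised and whether the call to AIDR failed. In this sketch the function name and the lowercase strategy identifiers are hypothetical stand-ins for the form values.

```python
def apply_enforcing_strategy(strategy: str, violation: bool,
                             aidr_error: bool) -> str:
    """Return 'block' or 'forward' for one guardrail evaluation."""
    if strategy == "audit":
        # Never blocks; violations and errors are only logged.
        return "forward"
    if strategy == "enforce":
        # Fail closed on both policy violations and AIDR errors.
        return "block" if (violation or aidr_error) else "forward"
    if strategy == "enforce_but_ignore_on_error":
        # Fail open on AIDR errors, fail closed on policy violations.
        if aidr_error:
            return "forward"
        return "block" if violation else "forward"
    raise ValueError(f"unknown strategy: {strategy}")


for name in ("enforce", "enforce_but_ignore_on_error", "audit"):
    print(name, apply_enforcing_strategy(name, violation=False, aidr_error=True))
```

The loop shows how each strategy treats an AIDR timeout or 5xx when no verdict is available.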

AIDR can return an HTTP 202 with a result.location polling URL on very large payloads. The TrueFoundry gateway currently issues a single synchronous call and treats a non-Success status as a failure, so 202s are handled by the Enforcing Strategy error path rather than polled. If you regularly process 1 MiB+ payloads, increase the guardrail timeout and consider running with Enforce But Ignore On Error so the occasional async response doesn’t block traffic.

Validation logic at a glance

- HTTP 400 from the gateway → either AIDR returned `result.blocked = true`, or `enforce_on_detection = true` and at least one detector flagged the request. Open the Request Logs page in TrueFoundry and expand the guardrail span to see the full AIDR `summary`, `detectors`, and `policy` that triggered the block.

- HTTP 401 / 403 from AIDR → API key is invalid or doesn’t have permission for the configured collector. Re-check the Auth Data field on the guardrail.

- HTTP 5xx or timeout from AIDR → with Enforce the request fails closed (HTTP 400). With Enforce But Ignore On Error the request is allowed through and the failure is logged.
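When reading request logs, the AIDR-side statuses mentioned on this page can be triaged with a small helper like the one below. It is a debugging sketch, not gateway behaviour: the function name and category labels are hypothetical, and treating HTTP 200 as the only success status is an assumption.

```python
from typing import Optional


def classify_aidr_status(status: Optional[int]) -> str:
    """Triage an AIDR HTTP status seen in logs; None stands for a timeout."""
    if status is None:
        return "error"  # timeout: handled by the Enforcing Strategy error path
    if status == 200:
        return "success"  # assumption: 200 is the only success status
    if status == 202:
        return "async_not_polled"  # large payload; not polled, treated as an error
    if status in (401, 403):
        return "auth"  # re-check the Auth Data field on the guardrail
    return "error"  # 5xx and anything else unexpected


for code in (200, 202, 401, 503, None):
    print(code, classify_aidr_status(code))
```

Both `error` and `async_not_polled` end up on the Enforcing Strategy error path, so whether the request is blocked depends on the strategy chosen for the guardrail.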

Reference

- CrowdStrike AIDR API reference: `guard_chat_completions`, `unredact`, request/response shapes

- CrowdStrike AIDR Findings page: where AIDR logs every analysed request

- TrueFoundry guardrails overview

- Configure guardrail rules