GPT-5.3-Codex is now available! You can access GPT-5.3-Codex through TrueFoundry AI Gateway. Follow the setup steps below to get started.

What is Codex?

Codex is the official command-line interface (CLI) tool for OpenAI, providing a streamlined way to interact with OpenAI’s language models directly from your terminal. With TrueFoundry LLM Gateway integration, you can route your Codex requests through the Gateway.

Key Features of OpenAI Codex CLI

- Terminal-Native AI Interactions: Chat with AI models directly from your terminal without switching contexts

- Intelligent Code Generation: Generate code snippets, functions, and programs across multiple programming languages using natural language prompts

- Streaming and Interactive Sessions: Real-time streaming responses enable dynamic, conversation-like interactions for code development

Prerequisites

Before integrating Codex with TrueFoundry, ensure you have:

- TrueFoundry Account: Create a TrueFoundry account with at least one model provider and generate a Personal Access Token by following the instructions in Generating Tokens. For a quick setup guide, see our Gateway Quick Start.

- Codex Installation: Install the Codex CLI on your system

- Virtual Model: Create a Virtual Model for each Codex model you want to use (see Create a Virtual Model below)

Why You Need a Virtual Model

Codex has internal logic that sends thinking tokens to certain models during processing. This works correctly with standard OpenAI-style model names (like gpt-5), but causes compatibility issues with TrueFoundry’s fully qualified model names (like openai-main/gpt-5 or azure-openai/gpt-5). When Codex is given a fully qualified name directly, it can send thinking tokens incorrectly, which leads to unexpected behavior.

Virtual Models fix this by letting you:

- Use a slug as the model name in Codex (e.g. gpt-5 or gpt-5.2-codex) so thinking tokens work as intended.

- Have the TrueFoundry Gateway map that slug to the fully qualified target (e.g. openai-main/gpt-5) and route requests there.

Setup Process

1. Configure Codex

Connect Codex with the TrueFoundry LLM Gateway using either environment variables or a config.toml file.

- Environment variables

- Config file (~/.codex/config.toml)

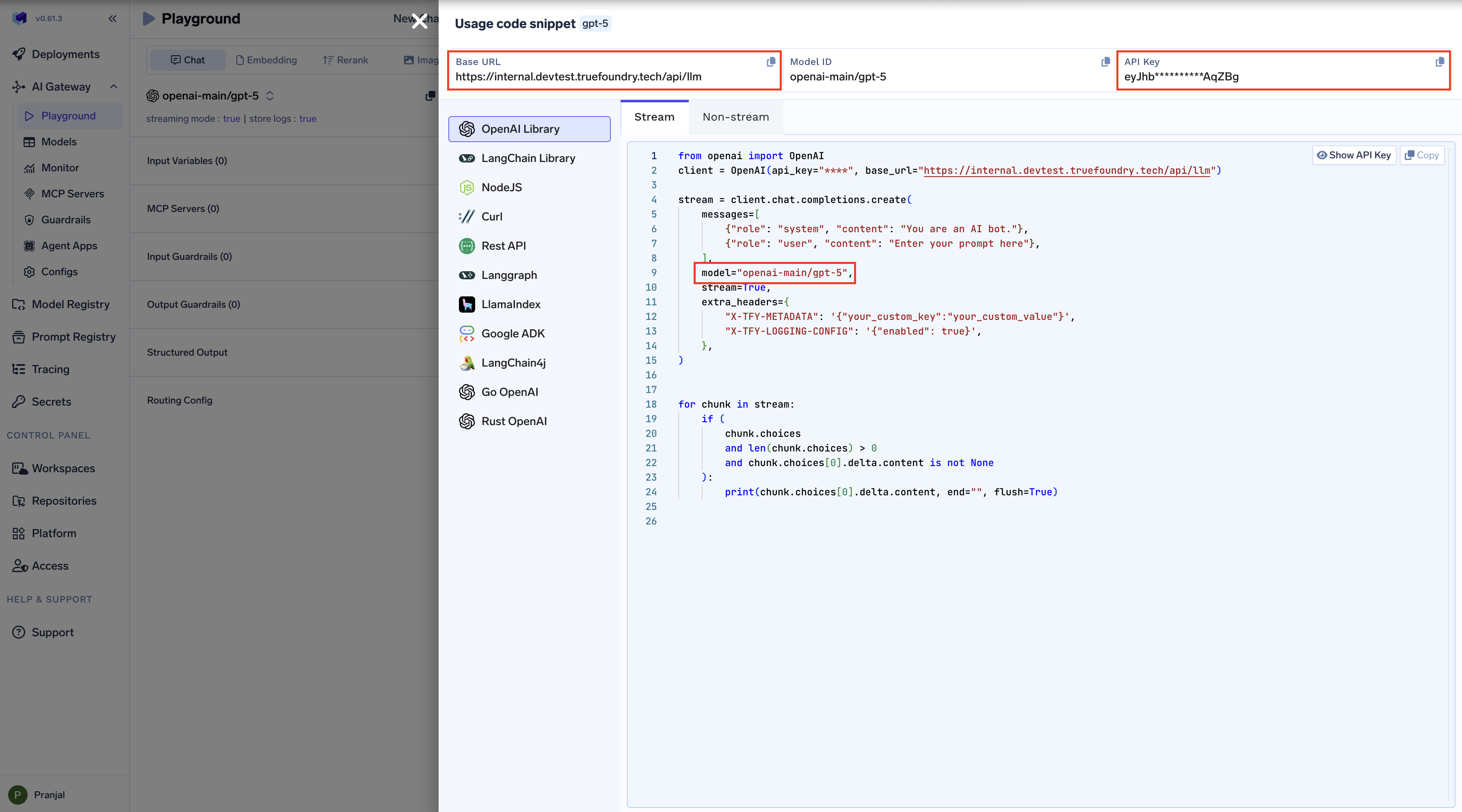

To connect Codex with TrueFoundry LLM Gateway, set these environment variables:

Replace TFY_API_KEY with your actual TrueFoundry API key and {GATEWAY_BASE_URL} with your TrueFoundry AI Gateway Base URL (how to find it).

Tip: Add these lines to your shell profile (.bashrc, .zshrc, etc.) to make the configuration persistent across terminal sessions.
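As a minimal sketch of the environment variables described above: it assumes Codex reads the standard OPENAI_BASE_URL and OPENAI_API_KEY variables, which is an assumption since the variable names are not spelled out in the text here. Both values are placeholders to replace with your own.

```shell
# Assumption: Codex picks up the standard OpenAI variable names.
# Placeholder values: substitute your real Gateway base URL and API key.
export OPENAI_BASE_URL="{GATEWAY_BASE_URL}"
export OPENAI_API_KEY="TFY_API_KEY"
```

Adding the same two lines to your shell profile keeps them set in new terminal sessions.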

2. Create a Virtual Model

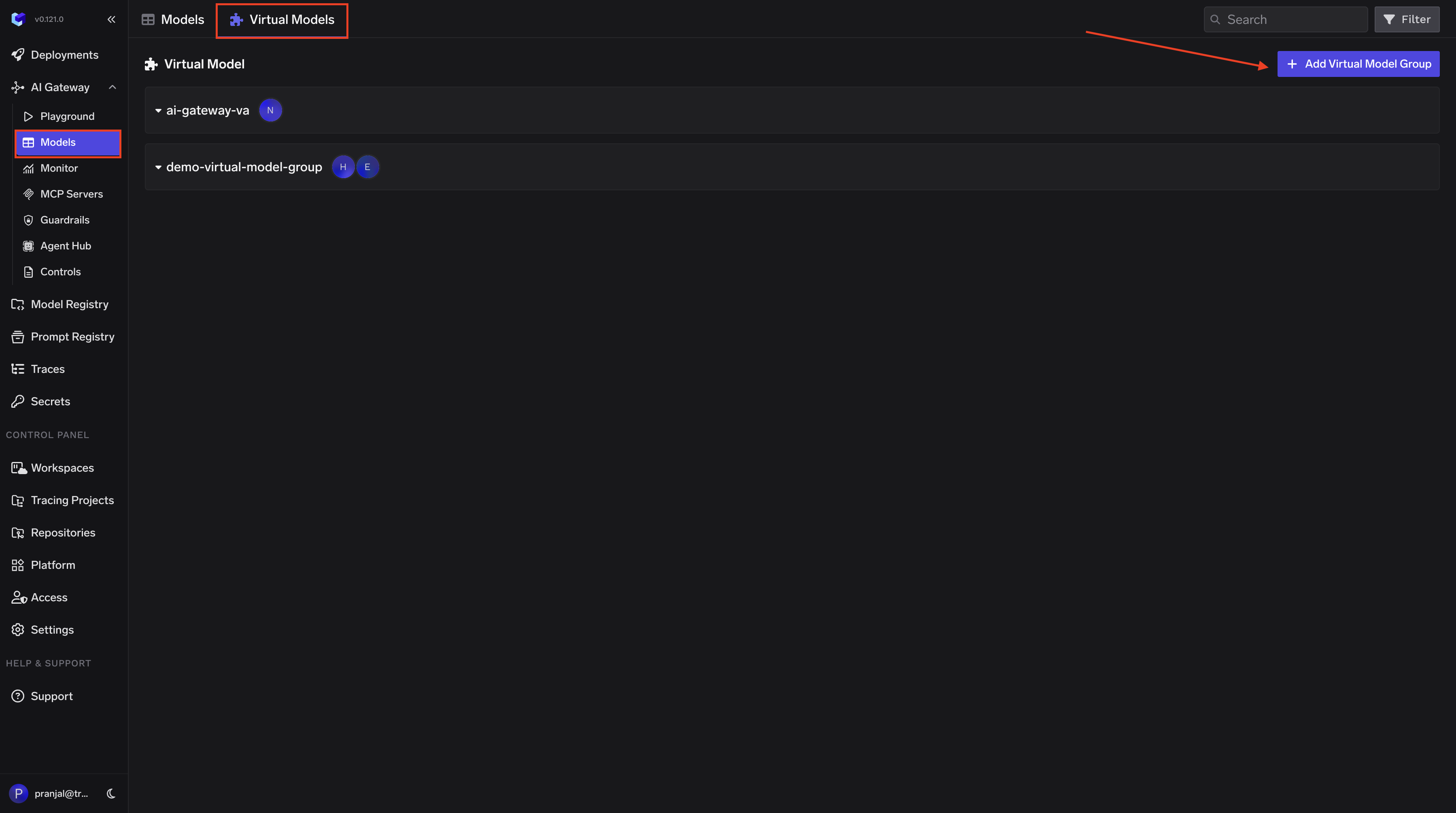

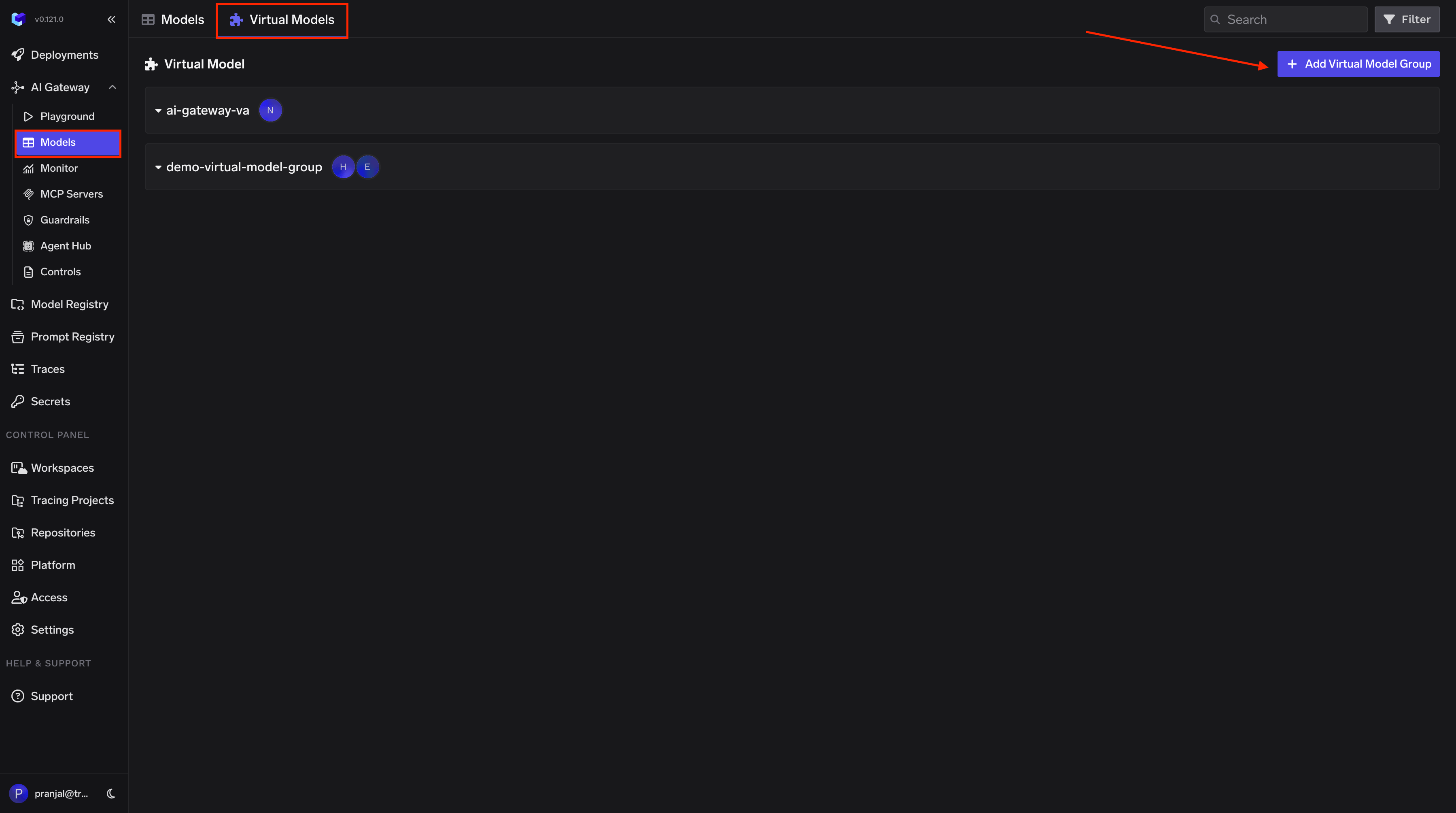

Create a Virtual Model so Codex can use a simple model name that the Gateway maps to your provider. Follow these steps:

- Open the Virtual Model / Routing page in the TrueFoundry AI Gateway dashboard

-

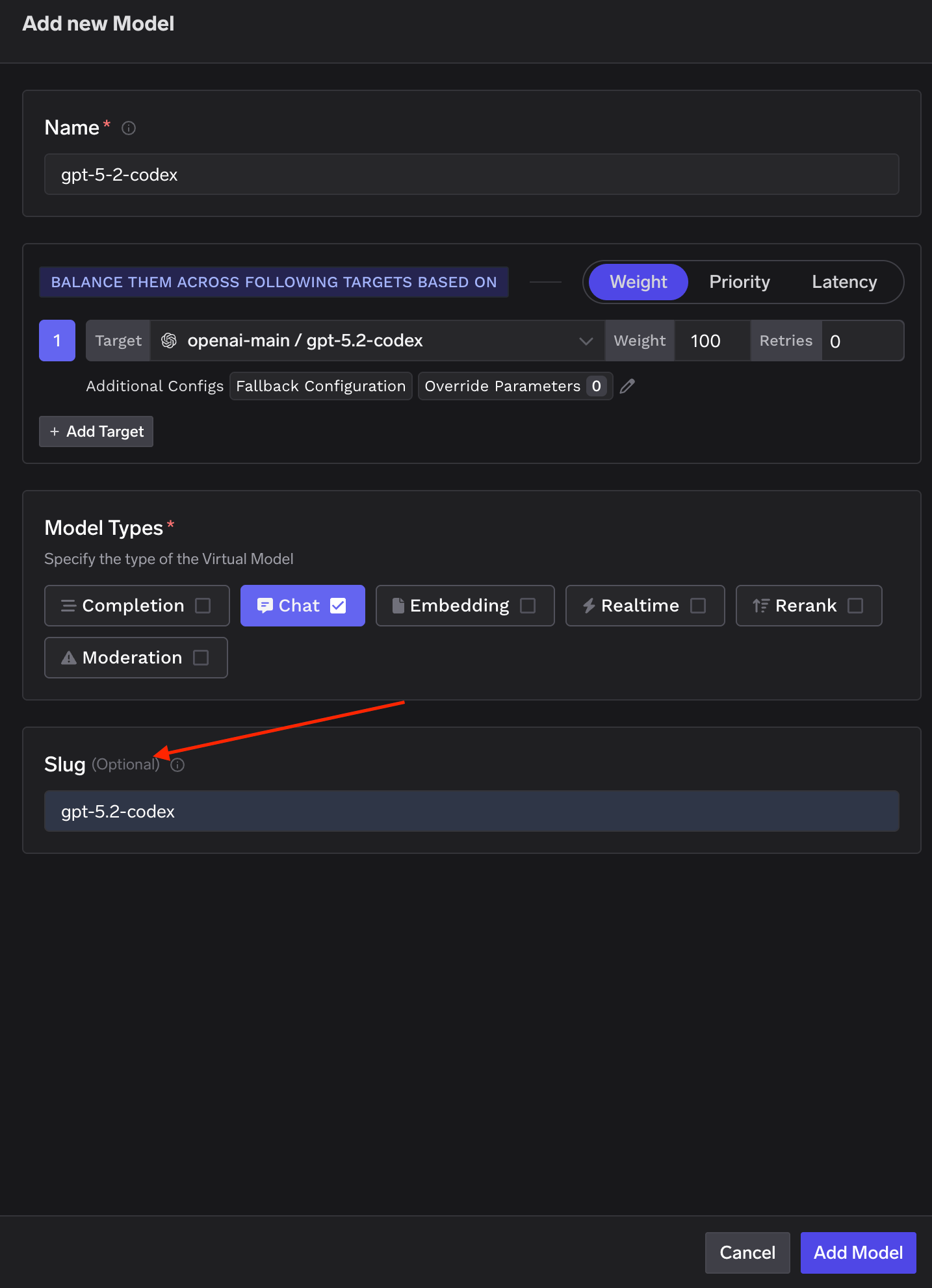

Create a new Virtual Model and add a Slug. This slug is the name you will use in Codex (e.g.

gpt-5.2-codexorgpt-5).Use a slug that matches the actual model ID (e.g.gpt-5.2-codexfor GPT-5.2-Codex). Codex recognizes these IDs and enables the right features (e.g. thinking tokens) -

Set the target to the fully qualified model name (e.g.

openai-main/gpt-5.2-codex). You can use one target at 100% weight or add multiple targets with weights for load balancing.

For example, if the slug is gpt-5.2-codex and the target is openai-main/gpt-5.2-codex at 100% weight, then when you run codex chat --model gpt-5.2-codex, the Gateway routes that request to openai-main/gpt-5.2-codex.

Use the slug as the model name in Codex (e.g. --model gpt-5.2-codex). Do not use the fully qualified name (e.g. openai-main/gpt-5.2-codex) in Codex; that can break thinking tokens and other behavior.

Usage Examples

Use the virtual model slug you created (e.g. gpt-5.2-codex or gpt-5) with the --model flag so requests go through the TrueFoundry Gateway.
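Because a fully qualified name can break thinking tokens, it may help to check the model name before passing it to Codex. The sketch below uses a hypothetical helper, is_slug, that only tests for a provider prefix (a "/" in the name). It is an illustration, not part of Codex or the Gateway.

```shell
# Hypothetical helper: succeeds only for a bare slug (no provider prefix).
is_slug() {
  case "$1" in
    "" | */*) return 1 ;;  # empty, or fully qualified like openai-main/gpt-5
    *) return 0 ;;
  esac
}

model="gpt-5.2-codex"
if is_slug "$model"; then
  echo "ok: $model"
else
  echo "refusing fully qualified name: $model" >&2
fi
```

Once the check passes, the slug can be handed to Codex through the --model flag.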

Basic usage

With options

Replace gpt-5.2-codex with whatever slug you set in your Virtual Model.

Understanding Virtual Model Routing

When you use --model <your-slug>, the Gateway routes the request according to that Virtual Model’s targets. With a single target at 100% weight, all traffic goes to that model.

You can also add multiple targets with weights in the Virtual Model (e.g. 70% to one provider, 30% to another). Example:
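As a rough illustration of what a 70/30 split means, the sketch below picks a target from a roll between 0 and 99. It only simulates weighted selection and is not how the Gateway is implemented. The function name pick_target and the second target name are hypothetical.

```shell
# Illustration only: not the Gateway's implementation.
# Hypothetical targets with 70/30 weights, as in the example above.
pick_target() {
  roll=$1   # an integer from 0 to 99
  if [ "$roll" -lt 70 ]; then
    echo "openai-main/gpt-5.2-codex"
  else
    echo "azure-openai/gpt-5.2-codex"
  fi
}

# Draw a pseudo-random roll with awk (portable across POSIX shells).
roll=$(awk 'BEGIN { srand(); print int(rand() * 100) }')
echo "roll=$roll -> $(pick_target "$roll")"
```

Rolls 0 through 69 select the first target and rolls 70 through 99 the second, so over many requests roughly 70% of traffic reaches the first provider.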